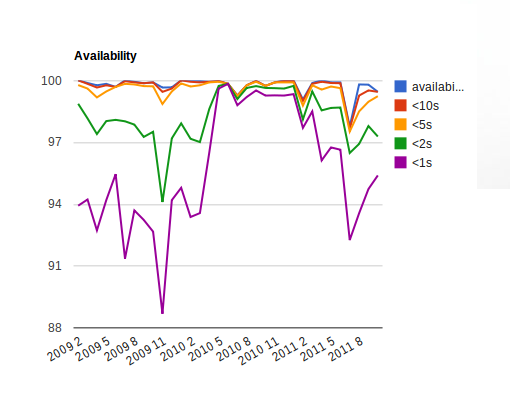

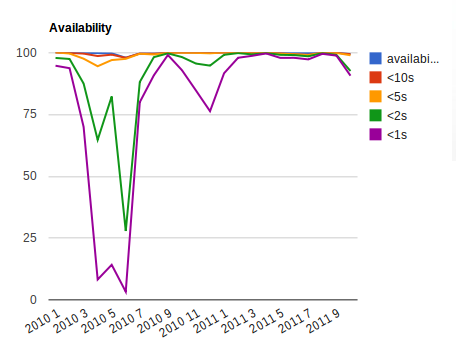

I track the current state of the project with an automated test suite that runs 24/7. It gives information about stability by randomly exploring the project's state space. Test runs use fresh builds from the auto-build system connected to the project's master branch.

Recently I have hit a typical stability regression scenario a few times: commit N+1, which should not have had any unexpected side effects, caused a crash in the auto-testing suite after just 2 minutes of random testing, which blocked the full test suite from running. OK, we finally got feedback (from the auto-test), but let's compute the bug detection delay here:

- auto-build phase: 30 minutes to 1 hour (we build a few branches in a cycle, and a build can sometimes wait a long time, especially if the build cache has to be refilled for some reason)

- test queue wait phase: 30 minutes (pending tests must finish before the new version is loaded)

- review of test results (currently manual): ~1 hour (we send automatic reports, but only twice a day)
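The phases above can be added up with a few lines of Python. This is only a rough sketch: the phase figures come from the list, while the `report_wait` parameter and the 12-hour gap between reports are my assumptions (two reports a day, evenly spaced), not measured values.

```python
# Phase durations in minutes as (best, worst), taken from the list above.
PHASES = {
    "auto-build": (30, 60),
    "test queue wait": (30, 30),
    "manual review": (60, 60),
}


def detection_delay(phases, report_wait=(0, 0)):
    """Return the (best, worst) total delay in minutes.

    report_wait is a hypothetical extra (best, worst) wait for the next
    automatic report to be sent.
    """
    best = sum(lo for lo, _ in phases.values()) + report_wait[0]
    worst = sum(hi for _, hi in phases.values()) + report_wait[1]
    return best, worst


best, worst = detection_delay(PHASES)
print(f"listed phases only: {best / 60:.1f} h .. {worst / 60:.1f} h")

# Assumption: reports are 12 hours apart, so the wait is between 0 and 12 h.
best, worst = detection_delay(PHASES, report_wait=(0, 12 * 60))
print(f"with report wait:   {best / 60:.1f} h .. {worst / 60:.1f} h")
```

The listed phases alone give 2.0 to 2.5 hours. Under the 12-hour assumption the worst case grows to 14.5 hours.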

In the worst case we may not notice the problem (regression) until the next day! That is too long in my opinion: a broken master branch may block other developers and slow down their work. There must be a better organization that lets us react faster to such problems:

1. Ask developers to launch the random test suite locally before every commit. A 5-minute automatic test should keep obvious crashes from being published on the shared master branch.

2. Auto-notify all developers about every failed test: we still need to wait for the build/test queue, and messages may start being ignored after a while.

3. Employ an automatic gatekeeper-like mechanism that promotes (merges) a commit after some sanity tests pass.

It looks like option 1 is the most efficient and the easiest to implement. Option 2 is too "spammy", and option 3 is the hardest to set up (probably something for the next project, with a language that has ready support for "commit promotion").
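A time-boxed local random test for the first option could look roughly like the sketch below. Everything here is hypothetical: `random_smoke_test` and `example_step` are illustrative names, and `example_step` stands in for one random action against the real project. The seed is reported so that a failing run can be replayed.

```python
import random
import time


def random_smoke_test(step, budget_seconds=300, seed=None):
    """Call step(rng) repeatedly until the time budget runs out.

    Returns (ok, seed, steps_run). Only Python exceptions are caught here;
    a native crash of the process would need a subprocess-based runner.
    """
    if seed is None:
        seed = random.randrange(2**32)
    rng = random.Random(seed)
    deadline = time.monotonic() + budget_seconds
    steps = 0
    while time.monotonic() < deadline:
        try:
            step(rng)
        except Exception as exc:
            print(f"FAILED after {steps} steps (seed={seed}): {exc!r}")
            return False, seed, steps
        steps += 1
    return True, seed, steps


def example_step(rng):
    # Hypothetical stand-in for one random action on the project state.
    value = rng.randint(0, 1000)
    if value < 0:
        raise RuntimeError("unexpected state")


# Short demo budget; the 5-minute run is the default budget_seconds=300.
ok, seed, steps = random_smoke_test(example_step, budget_seconds=0.2)
print("passed" if ok else "failed", "seed:", seed, "steps:", steps)
```

If the project uses Git (an assumption on my side), a script like this could be called from a `pre-commit` hook and exit with a non-zero status on failure, which aborts the commit.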

Option 2 might get interesting if applied to the latest submitters only. Maybe I'll give it a try.
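Picking the latest submitters could be as simple as collecting the authors of all commits between the last build that passed and the first one that failed. This is a minimal sketch under assumptions: the `Commit` type, the `suspects` function and the revision names are made up for illustration, and the history is expected to be ordered from oldest to newest.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Commit:
    rev: str
    author: str


def suspects(history, last_good_rev, first_bad_rev):
    """Return authors of commits after last_good_rev up to first_bad_rev.

    history is ordered oldest to newest; each author is listed once,
    in order of first appearance.
    """
    revs = [c.rev for c in history]
    start = revs.index(last_good_rev) + 1
    end = revs.index(first_bad_rev) + 1
    seen = []
    for commit in history[start:end]:
        if commit.author not in seen:
            seen.append(commit.author)
    return seen


# Hypothetical history: r100 passed the tests, r103 crashed.
history = [
    Commit("r100", "alice"),
    Commit("r101", "bob"),
    Commit("r102", "carol"),
    Commit("r103", "bob"),
]
print(suspects(history, last_good_rev="r100", first_bad_rev="r103"))
# prints ['bob', 'carol']
```

Only those authors would get the failure message, which should keep the notifications from turning into spam for everybody else.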