Good software developers are lazy people. They know that any work that can be automated should be automated. They have the tools and skills to find automated (or partially automated) solutions for many boring tasks. As a bonus, only the most interesting tasks (those that involve real creativity) remain for them.

I think I'm lazy ;-). I hope it makes my software development better, because I like to employ the computer for more "things" than acting as a typewriter and running builds. If you are reading this blog, you are probably doing the same. Today I'd like to focus on an example from the C++ build process.

The problem

If you're a programmer with a Java background, C++ may look a bit strange. Interfaces are declared in textual "header" files that are included by client code and processed together with it by the compiler, which generates object (.o) files. In the second stage the linker collects all object files and generates an executable (in the simplest scenario). If a symbol is missing from a header file, the compiler raises an error; if a symbol is missing at the linking stage, the linker complains.

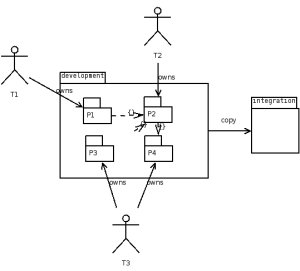

On a recent project my team was responsible for developing a C++ library (header and library files). This library would then be used by other teams to deliver the final product. Functionality was defined by the header files. We started development with unit tests (automated with CPPUnitLite), and then the first delivery was released.

When other teams started to call our library, we discovered that some method implementations were missing! It's possible to build a binary with some methods left undefined in the *.cpp files as long as nothing calls them. Once you call such a method from source code, the linker reports an undefined symbol.

I raised the problem at our daily stand-up meeting and got the following suggestion: compare the symbols stored in the library files (*.a, implementation) with the method signatures from the public *.h files (specification). Looks hard?

The solution

It's not very hard. First, collect all symbols from the library files, stripping method arguments for easier parsing:

    nm -C ./path/*.a | awk '$2=="T" { sub(/\(.*/, "", $3); print $3; }' \
        | sort | uniq > $NMSYMS
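To make the filter easier to follow, here is a minimal sketch that runs it on a few made-up lines in the format `nm -C` prints. The `Widget` class, its methods and the helper function names are hypothetical; real output depends on your library and toolchain.

```shell
# Hypothetical `nm -C` output for a single object file inside an archive.
sample_nm_output() {
cat <<'EOF'

widget.o:
0000000000000000 T Widget::Widget(int)
0000000000000010 T Widget::resize(int, int)
                 U operator new(unsigned long)
0000000000000030 t Widget::helper()
0000000000000040 T Widget::resize(double)
EOF
}

# Same filter as in the command above: keep only global text symbols ("T"),
# cut everything from the opening parenthesis, then sort and de-duplicate.
extract_defined() {
    awk '$2=="T" { sub(/\(.*/, "", $3); print $3; }' | sort | uniq
}

sample_nm_output | extract_defined
```

Undefined (`U`) and local (`t`) symbols are dropped, and the two `resize` overloads collapse into a single `Widget::resize` entry because the argument list is cut off.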

Then scan the header files using some magic regular expressions and a bit of the AWK programming language:

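    # Remember the current class name (drop the ":" from "class Foo:")
    $1 == "class" {
        gsub(/:/, "", $2)
        CLASS = $2
    }

    # A closing brace at the start of a line ends the class
    /^ *}/ {
        CLASS = ""
    }

    # Skip /* ... */ comments
    /\/\*/,/\*\// {
        next
    }

    # Keep collecting a multi-line declaration until ";" shows up
    buffer {
        buffer = buffer " " $0
        if (/;/) {
            print_func(buffer)
            buffer = ""
        }
        next
    }

    function print_func(s) {
        if (s ~ /= *0 *;/) {
            # pure virtual function - skip
            return
        }
        if (s ~ /{ *}/) {
            # inline method - skip
            return
        }
        sub(/ *\(.*/, "", s)      # cut the argument list
        gsub(/\t/, " ", s)        # treat tabs as spaces
        gsub(/^ */, "", s)
        gsub(/[^ ]+ +/, "", s)    # drop every word except the last one (the method name)
        print CLASS "::" s
    }

    # Inside a class: a line that looks like a method declaration
    CLASS && /[a-zA-Z0-9] *\(/ && !/^ *\/\// && !/= *0/ && !/{$/ && !/\/\// {
        if (!/;/) {
            buffer = $0
            next
        }
        print_func($0)
    }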

Then run the above script:

    awk -f scripts/scan-headers.awk api/headers/*.h | sort | uniq > $HSYMS

Now we have two files with sorted symbols that look like these entries:

    ClassName::methodName1
    ClassName2::~ClassName2

Let's check if there are methods that are undefined:

    diff $HSYMS $NMSYMS | grep '^<'
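If you want the build to fail when something is missing, the comparison can be wrapped in a small function. This is only a sketch: the `report_missing` helper and the `Widget` symbols are made up for illustration, and both lists are sorted under the same locale so that `diff` lines them up.

```shell
# Hypothetical helper: print symbols declared in the headers but absent from
# the library; return non-zero when there are any.
report_missing() {
    missing=$(diff "$1" "$2" | grep '^<' | sed 's/^< //')
    [ -z "$missing" ] && return 0
    printf '%s\n' "$missing"
    return 1
}

# Made-up symbol lists standing in for the real $HSYMS and $NMSYMS files.
HSYMS=$(mktemp)
NMSYMS=$(mktemp)
printf '%s\n' 'Widget::Widget' 'Widget::hide' 'Widget::resize' | LC_ALL=C sort -u > "$HSYMS"
printf '%s\n' 'Widget::Widget' 'Widget::helper' 'Widget::resize' | LC_ALL=C sort -u > "$NMSYMS"

status=0
result=$(report_missing "$HSYMS" "$NMSYMS") || status=$?
echo "$result"
rm -f "$HSYMS" "$NMSYMS"
```

Here `Widget::hide` is declared but never defined, so it is reported and the status is non-zero. `Widget::helper` exists only in the library and is ignored, just as `grep '^<'` ignores the `>` lines.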

You will see all the cases found. Voilà!

Limitations

Of course, the selected solution has some limitations:

- header files are parsed with regular expressions, so fancy syntax (preprocessor tricks) will make it unusable
- argument types (which are part of a method's signature) are simply ignored

but having some results is better than having no analysis tool in place, IMHO.

I found a very interesting case using this tool: the implementation of one method was defined in a *.cpp file, but the resulting *.o file was merged into a private *.a library. As a result, the public *.a library still had this method missing! It's good to find such a bug before the customer does.

Conclusions

I spent a little over an hour developing this micro tool, but it saved many hours of manual source code analysis and many of the bug reports we would otherwise have expected (it's very easy to miss something when the codebase is big).

Sometimes you have to update many files at once. If you are using an IDE, the replace dialog has a "search in all files" option. I'll show you how to make such massive changes faster, using just the Linux command line. Place this file in ~/bin/repl
