Continuous Integration is a great thing. It lets you monitor the state of your project commit by commit, so every build failure is easy to spot.

If you connect your unit and integration tests properly, it can also tell you about the runtime properties of the project, for example:

- Does it boot properly?

- Does it run for the first 5 minutes without crashing?

- Are all unit tests 100% green?

- Are all static tests (think: FindBugs, lclint, pylint, ...) free of selected defect types?
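A check like "does it survive the first minutes?" can be approximated with a few lines of shell. This is only a minimal sketch: the function name `survives_for` is hypothetical, and it assumes the program is a long-running process, so an early exit (even with status 0) counts as a failure.

```shell
# survives_for SECONDS COMMAND [ARGS...]
# Starts COMMAND in the background and returns 0 only if it is still
# running after SECONDS. The process is stopped afterwards.
survives_for() {
    duration=$1
    shift
    "$@" &                          # launch the program under test
    pid=$!
    sleep "$duration"
    if kill -0 "$pid" 2>/dev/null; then
        # still alive: stop it and report success
        kill "$pid" 2>/dev/null
        wait "$pid" 2>/dev/null || true
        return 0
    fi
    # the process ended before the time was up
    wait "$pid" 2>/dev/null || true
    return 1
}
```

A build step could then call, for example, `survives_for 300 ./SampleCmake` and let a non-zero exit status mark the build as failed.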

The implementation:

- you encourage your team to push changes frequently to the main development branch (with a high-quality unit test suite you can even skip a topic-branch policy)

- you set up a Continuous Integration tool to scan all the repositories and detect new changes automatically

- for each such change a new build is started and the tests are carried out automatically

- all the build artefacts (including test outcomes) are collected

- the teams are notified by e-mail about build/test failures so that they can carry out fixes quickly

OK, so the plan is outlined above. Let's dig into the details of each step. As the implementation tool I'll use the most popular one, Hudson/Jenkins (these are two projects, but they are essentially the same tool).

I'm going to address all the features I expect from a continuous integration system (based on my experience with other CI systems).

The installation

Hudson can be downloaded from the http://hudson-ci.org/ site as a single war file. Installation is trivial: you can run it with any Java runtime compatible with JRE 7:

java -jar hudson-3.3.2.war

Then open the Hudson console in your browser at http://SERVER_IP:8080 and that's it! It's installed and ready for the job!

Basic build job specification (manual)

For Hudson to know how to build the project, the easiest way is to manually check out the project workspace somewhere on the build server. Let's assume it's "/home/darek/src/cmake". I have a test project there, connected to the GIT version control system. I can issue git commands there and have access to the GIT server to pull new commits.

Select the "New Job" link and fill in the following fields:

- Task Name: I suggest using a name without spaces (so it can be used in file names without problems), for example: "ProjectGamma"

- "Build a free-style software job": as it's a C++ project, let's design it from scratch

After creating the project you can fill in its properties. I'll list the ones you will probably need to change:

- "Advanced Job Options" / "Use custom workspace" = "/home/darek/src/cmake"; this tells Hudson to change to this directory before the build

- "Discard Old Builds" / "Max # of builds to keep" = 100 (choose depending on your build output and available space on build machine)

- "Add build step" / "Execute shell" / "Command" = "git pull && make clean && make"

- "Post-build Actions" / "Archive the artifacts": fill in the field with the executable name, in my case "SampleCmake"

- Save

Then it's time to click the "Build now" link / icon. A new build starts and finishes. We can see the console output of the process and download the build output (the "SampleCmake" binary).
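The one-line "git pull && make clean && make" command already stops at the first failing step. If you want the console output to say which step failed, the chain can be wrapped in a small helper. This is a sketch only; the `run_steps` name is hypothetical and not part of Hudson.

```shell
# run_steps STEP [STEP...]
# Runs each step in order, echoing it first; stops at the first failure
# and reports the failing step on stderr.
run_steps() {
    for step in "$@"; do
        echo "==> $step"
        if ! sh -c "$step"; then
            echo "FAILED: $step" >&2
            return 1
        fi
    done
}

# In the "Execute shell" field this might be used as:
#   run_steps "git pull" "make clean" "make"
```

The non-zero return value is what lets the build be marked as failed, just as with the original `&&` chain.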

To summarize: at this point we have the following functionality covered:

- Launch a build manually based on fresh commits from the GIT repository

- Observe the build status

- Download generated artefacts

Record the exact version (GIT commit)

It's very important to save the commit ID somewhere so that you know which state the build represents. In my projects I usually collect that information in a file called "versions.txt" (a file, because usually many repositories are involved). I'm going to collect there the GIT sha used for the build and a short commit description (very useful if you want to quickly see the state).

To achieve that, add another build step and enter:

git log --decorate --pretty=oneline --abbrev-commit > versions.txt

And change your "Archive the artifacts" line to:

SampleCmake,versions.txt

After the build you can see versions.txt available in the build artefacts.
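Since several repositories are usually involved, the same idea can be extended to write one line per repository. The following is only a sketch: the function name `record_versions` and the directory names in the usage line are hypothetical, and `-1` is added here so that only the commit being built is recorded rather than the whole history.

```shell
# record_versions OUTPUT_FILE REPO_DIR [REPO_DIR...]
# Writes one line per repository: directory, abbreviated sha, commit subject.
record_versions() {
    out=$1
    shift
    : > "$out"                      # start with an empty file
    for repo in "$@"; do
        # -1 limits the log to the commit that is currently checked out
        line=$(git -C "$repo" log -1 --pretty=oneline --abbrev-commit) || return 1
        printf '%s %s\n' "$repo" "$line" >> "$out"
    done
}
```

A build step might then call `record_versions versions.txt . ../libfoo ../libbar`, where the two extra directories stand for whatever other repositories the build depends on.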

Trigger builds automatically

The next step is automation. You need to trigger the build as soon as a new version is available to pull from GIT.

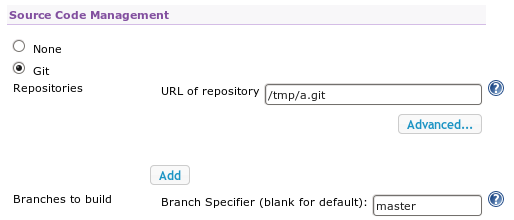

First of all, you need the GIT plugin: "Manage Hudson" / "Manage Plugins" / "Available" / "Featured" / "Hudson GIT plugin".

Then (after a Hudson restart) new options are available in the build job configuration:

(I've added a local GIT repository placed under /tmp/a.git; for real deployments you need the remote URL of the git repository.)

Additionally, you need to set how often new versions are checked for (Build Triggers / Poll SCM): "* * * * *" means check every minute.

And, finally, you need to remove the manual "git pull" step from the build configuration, as the plugin takes care of that.

Then, once you add a new commit, a new build will be started automatically.
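To get a feeling for what polling amounts to, here is a rough shell sketch of the idea: ask the remote for its current sha and compare it with the one seen last time. This is not how the plugin is implemented; the function names and the state file are hypothetical and only illustrate the concept.

```shell
# remote_head URL: print the sha that HEAD of the remote repository points to.
remote_head() {
    git ls-remote "$1" HEAD | cut -f1
}

# needs_build URL STATE_FILE
# Returns 0 (and records the new sha in STATE_FILE) when the remote sha
# differs from the recorded one, 1 when nothing changed, 2 on lookup failure.
needs_build() {
    current=$(remote_head "$1")
    [ -n "$current" ] || return 2          # remote unreachable or empty
    last=$(cat "$2" 2>/dev/null || true)
    if [ "$current" != "$last" ]; then
        echo "$current" > "$2"
        return 0
    fi
    return 1
}
```

Run once a minute against /tmp/a.git, `needs_build /tmp/a.git last_built.sha` would succeed only right after a new commit appears, which is the moment a build should start.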