About This Site

Software development stuff


Entries from February 2010.

Thu, 18 Feb 2010 11:38:23 +0000

I found an interesting error on an Oracle XE instance installed for one of my projects. After running a test case that uses JDBC to perform some operations on the database, all tests started to fail with this exception:

Caused by: java.sql.SQLException: ORA-00600: internal error code, arguments: [kdsgrp1], [], [], [], [], [], [], []

at oracle.jdbc.driver.DatabaseError.throwSqlException(DatabaseError.java:112)

at oracle.jdbc.driver.T4CTTIoer.processError(T4CTTIoer.java:331)

at oracle.jdbc.driver.T4CTTIoer.processError(T4CTTIoer.java:288)

at oracle.jdbc.driver.T4C8Oall.receive(T4C8Oall.java:743)

at oracle.jdbc.driver.T4CPreparedStatement.doOall8(T4CPreparedStatement.java:216)

at oracle.jdbc.driver.T4CPreparedStatement.executeForRows(T4CPreparedStatement.java:955)

at oracle.jdbc.driver.OracleStatement.executeMaybeDescribe(OracleStatement.java:1062)

Restarting the Oracle database did not help. It seems the tablespace was broken. I rebuilt the database (DROP, then CREATE) and the error disappeared.

Interesting ...

Wed, 17 Feb 2010 22:22:32 +0000

What's the purpose of internal project documentation? To help people do their jobs. Developers need knowledge to be distributed across the team, testers need a definition of proper system behaviour, and marketing needs information on product features in order to sell the product.

Questions

The important knowledge that developers need when making updates can be summarized in a few questions:

- Who recently changed that line of code?

- When was this method changed?

- Why does the algorithm work that way?

There's a simple method of automatically saving and retrieving this kind of information: Subversion (or any other version control system). How?

Answers

There's a nice feature of version control systems that is not the most frequently used but is very useful: annotation/blame. For a given file, this special view shows you:

- Who changed this line?

- When was this line changed?

- Revision number of commit => Log entry => Bug tracker task ID => rationale (Why)

With this information you can understand the source code better.

How to check annotation using different tools:

- svn annotate filename

- git annotate filename

- bzr annotate filename

- Eclipse: Team / Show Annotation
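The same "who" and "when" can also be collected by a script. Below is a minimal sketch in Python, assuming Git and the output of `git blame --line-porcelain`. The helper names (parse_line_porcelain, blame_file, changed_at, lines_per_author) are made up for this example and are not part of any tool.

```python
import re
import subprocess
from collections import Counter
from datetime import datetime, timezone

# Each blame entry starts with "<40-hex commit id> <original line> <final line> [<count>]".
ENTRY_HEADER = re.compile(r"^([0-9a-f]{40}) (\d+) (\d+)")


def parse_line_porcelain(text):
    """Turn `git blame --line-porcelain` output into one dict per source line."""
    entries = []
    current = {}
    for raw in text.split("\n"):
        if raw.startswith("\t"):
            # A TAB-prefixed line carries the source text and closes the entry.
            current["code"] = raw[1:]
            entries.append(current)
            current = {}
            continue
        if not raw:
            continue
        header = ENTRY_HEADER.match(raw)
        if header and not current:
            current = {"commit": header.group(1), "line": int(header.group(3))}
            continue
        # Remaining lines look like "author Alice" or "author-time 1266400000".
        key, _, value = raw.partition(" ")
        current[key] = value
    return entries


def blame_file(path):
    """Run git blame for one file (assumes git is installed and path is tracked)."""
    result = subprocess.run(
        ["git", "blame", "--line-porcelain", path],
        capture_output=True, text=True, check=True,
    )
    return parse_line_porcelain(result.stdout)


def changed_at(entry):
    """When the line was last changed, as a UTC datetime."""
    return datetime.fromtimestamp(int(entry["author-time"]), tz=timezone.utc)


def lines_per_author(entries):
    """Count how many lines of the file were last touched by each author."""
    return Counter(entry["author"] for entry in entries)
```

Calling lines_per_author(blame_file("some/file.py")) inside a Git working copy gives a quick picture of who last touched the file. The "why" still has to be followed by hand from the commit id to the log entry and the bug tracker.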

The problem

It looks simple, but there's a "quirk" here. If you make massive code changes (for example, to enforce the n+1th coding standard), you overwrite the information about the original authors of the source code. Annotation (and the log) then becomes useless.

That's why I'm asking you:

Do not reformat whole files on commit, PLEASE!

Mon, 15 Feb 2010 22:48:35 +0000

I just got an email from my monitoring system:

Site: http://twitter.com is down ('502 Bad Gateway')

I looked at the main Twitter page and saw this picture:

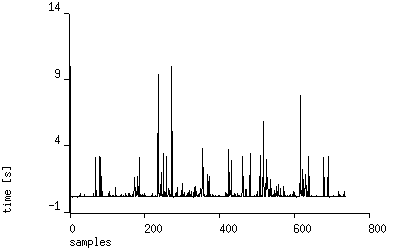

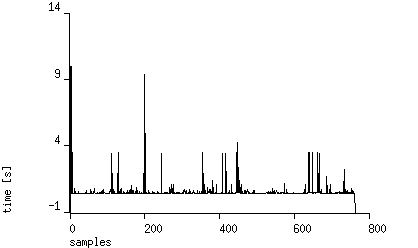

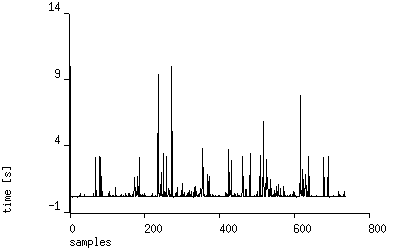

I checked that Twitter responds very slowly and randomly shows the "over capacity" page. You can see details in the site-uptime public report. Here's the response graph from the last few days (measurement interval 15 minutes, HEAD requests):

UPDATE (2010-04-15): after a few weeks the situation is getting worse:

Sat, 13 Feb 2010 16:44:04 +0000

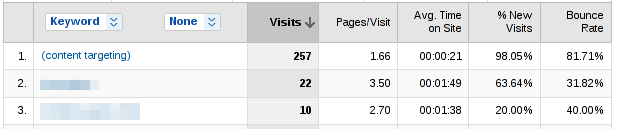

Google AdWords is a popular marketing tool that allows you to attract customers' attention. You "pay per click": you pay whenever a potential customer is directed to your page (or e-commerce site).

There are two main variations of this tool:

- the matching advert is selected based on keywords entered by the user in Google search

- the matching advert is selected based on the content of a partner page (AdSense partner)

It seems both methods (keyword-based and content-based) are equally efficient, but wait: there's a big difference. When a user searches for some keywords, it's very likely he will be interested in your product or service. A user coming from an AdSense page may have been directed to your page for a different reason.

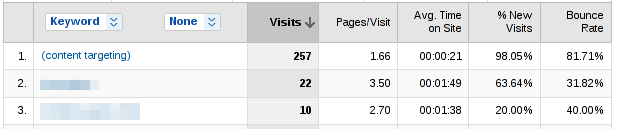

I used both methods for some time and got the following results from Analytics (Analytics / Traffic Sources / AdWords / AdWords Campaigns / ... / (content targeting)):

As you can see, most traffic comes from "content targeting" (partner sites). The best keyword brought 10 times fewer visitors. But look at the "Bounce Rate" and "Avg. Time on Site" parameters: more than 80% of these visitors left my site immediately. Keyword-based targeting gave better results: a lower bounce rate and a longer time spent on the site.

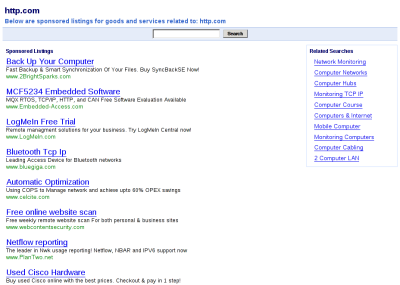

Let's see what the best "partner site" (AdWords / Networks / Managed placements - off show details / ) looks like:

It's a "trash site": a highly positioned site (often with "stolen" content) focused on AdSense monetization. That's why the quality of visitors from this kind of site is very low (high bounce rate).

Are your visitors coming from a "trash site"?

Fri, 12 Feb 2010 22:42:58 +0000

Recently I registered an account on twitterfeed.com, a site that forwards blog RSS feeds to Twitter and Facebook accounts. The headers of the incoming mail attracted my attention:

Return-Path: root@mentiaa1.memset.net

(...)

Received: from mentiaa1.memset.net (mentiaa1.memset.net [89.200.137.108])

by mx.google.com with ESMTP id 11si7984998ywh.80.2010.02.12.06.24.18;

(...)

Received: (from root@localhost)

by mentiaa1.memset.net (8.13.8/8.13.8/Submit) id o1CERcGq004355;

(...)

From: noreply@twitterfeed.com

(...)

The interesting parts are the root addresses (root@mentiaa1.memset.net and root@localhost). As you can see, the registration e-mail was sent from the root account. Probably the same user ID is used for the WWW application. That means if you break into the WWW application, you can gain control over the whole server.

The preferred way to implement WWW services is to use an account with low privileges (www-data in Debian), because then breaking into the service will not threaten the whole server.
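Spotting this in your own outgoing mail can be automated. Here is a small sketch in Python using the standard email package. The function name sent_as_root and the two checks (Return-Path local part, "from root@" in a Received header) are my own assumptions about what counts as a warning sign, not an established rule.

```python
from email import message_from_string
from email.utils import parseaddr


def sent_as_root(raw_message):
    """Return True if the headers suggest the mail was submitted by root."""
    message = message_from_string(raw_message)

    # Return-Path may be written as "root@host" or "<root@host>".
    _, return_path = parseaddr(message.get("Return-Path", ""))
    if return_path.partition("@")[0].lower() == "root":
        return True

    # Local submission usually leaves a header like "Received: (from root@localhost)".
    received_headers = message.get_all("Received", [])
    return any("from root@" in header.lower() for header in received_headers)
```

Running it over a test message sent by your own application is a cheap way to notice that the application runs with more privileges than it should.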

Thu, 11 Feb 2010 00:13:07 +0000

J2EE is a set of Java-based technologies used to build applications accessible over the network. Its flexibility and configurability, based mainly on XML files, mean that more and more errors are beyond the control of the compiler.

Older systems described the interaction between parts of the system directly in code, so it could be checked (at least partially) by the compiler in the build phase. The trend to move this kind of information outside Java code unfortunately means that many configuration errors will not be caught by the compiler. Those errors become visible later, at run time.

The complexity of the J2EE architecture makes the cycle (recompilation, installation, testing in a browser) long. This makes the "change and run" development method significantly harder. What can we do to speed up the process of developing J2EE applications?

(This is a translation of an article I wrote two years ago on static verification.)

Static Verification

For the average developer, static verification of software means a "correctness proof". The aim is to catch errors hidden in the system without actually running it. It should (theoretically) find all implementation bugs before the testing phase. However, this idea has some serious problems:

- In practice, writing a formal specification of functionality is hard (mathematical background required)

- Tools for verification are difficult to use and require a lot of practice for productive use

- It's not possible to check automatically against a formal specification; human intervention is required

As you can see, complete static verification is not feasible in typical systems. But can we take some benefits from the ideas of static verification?

Resources in J2EE

Let's define the term "resource" for the purpose of this article. It's an element of a J2EE application that can be analysed independently and that relates to other resources after installation in the application server. One example of a resource is Java code, which is analysed by the compiler during compilation. Examples of such "resources" (with the means of access used for analysis):

- Java code (access: regular expressions, the reflection mechanism)

- Struts configuration (access: the SAX parser)

- JSP files (access: the SGML parser)

- Tiles configuration (access: the SAX parser)

- Translations in ApplicationResources_*.properties (access: the standard Java library)

- ...

Example: Struts static verification

What benefits can be achieved by static, automated analysis of the above resources? Here are some common errors in J2EE/Struts applications, typically discovered after deployment:

- An exception caused by an html:text tag whose property attribute names a property that does not exist in the form-bean

- A typo in the action attribute of an html:form tag, which results in an error because no such URL exists

- A missing translation for static text displayed by a bean:message tag

- Improper use of the name attribute in one of the tags, which causes a runtime exception

Finding such errors, especially when the project is subject to constant scope changes, is inefficient and tiring. Wouldn't it be easier to get a complete list of these errors within a few seconds before deployment (instead of wasting testers' time on such obvious defects)?

I believe the tester's job is to check compliance with the specification; simple errors and typos should no longer appear in the testing phase. How can we statically "catch" the class of errors described above?

- A JUnit test can match form-bean definitions (POJO classes) against html:text tags and check that properties referenced from JSP are present in the Java class

- Actions called from JSP can be compared to definitions in the XML file

- For each occurrence of bean:message we can check whether there is an entry in the messages file

- Every "name" used in JSP can be checked against the bean list from the Struts config file
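The bean:message check is the easiest one to sketch. The example below is written in Python rather than as a JUnit test, purely for brevity. The regular expression, the simplified .properties parsing (no line continuations or escapes) and the function names are assumptions made for illustration, not the implementation I used.

```python
import re

# Matches the key attribute of a <bean:message .../> tag.
MESSAGE_KEY = re.compile(r'<bean:message\b[^>]*\bkey\s*=\s*"([^"]+)"')


def message_keys(jsp_text):
    """All message keys referenced by bean:message tags in a JSP source."""
    return set(MESSAGE_KEY.findall(jsp_text))


def property_keys(properties_text):
    """Keys defined in a .properties file (simplified parsing)."""
    keys = set()
    for line in properties_text.splitlines():
        line = line.strip()
        if not line or line[0] in "#!":
            continue  # skip blank lines and comments
        # The key ends at the first '=', ':' or whitespace.
        keys.add(re.split(r"\s*[=:]\s*|\s+", line, maxsplit=1)[0])
    return keys


def missing_translations(jsp_text, properties_text):
    """Keys used in the JSP that have no entry in the messages file."""
    return sorted(message_keys(jsp_text) - property_keys(properties_text))
```

Run over all JSP files of a project, a check like this lists every missing translation in one pass, before anything is deployed.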

Implementations

I was able to apply similar techniques to the following software configurations:

- JSF: Java and JSP parsing based on regular expressions, the parser and verifier written in AWK

- Struts: SGML parser for the JSP plus Java reflection API

- Hibernate: static analysis of query parameters in HQL vs the bean class that holds the query parameters

There are also a few implementations from outside the Java world:

- Zope ZPT templates: XML parser plus Python reflection API

- Custom regexp-based rules checked against Python code

Summary

Static bug hunting means finding bugs early (before runtime) and with high coverage (not achievable by manual testing). It saves a lot of effort spent tracking simple bugs and leaves testers time to do their real work (checking against the specification).

Wed, 10 Feb 2010 09:41:37 +0000

"ORA-01722: invalid number" is raised when a number was passed as a query parameter, but another type (string) was expected.

The error message isn't very helpful, is it?

Mon, 08 Feb 2010 22:56:06 +0000

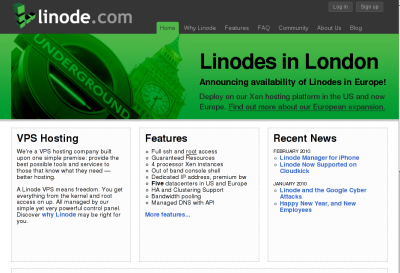

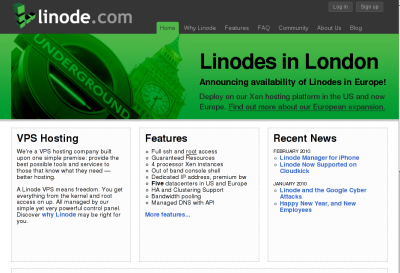

Linode.com sells unmanaged VPS servers based on Xen virtualisation technology.

VPS stands for Virtual Private Server: one physical server is split into smaller parts, and every "part" gets its own IP, disk space, CPU and IO resources. You share server resources with other VPSes, but you get much better isolation and customisation than shared hosting offers.

Off-topic note: although there's a management panel for Linode, you should know Linux/Unix administration very well to make use of an "unmanaged VPS". If you are not an advanced administrator, better stay with shared hosting or buy a "managed VPS". It will save you many headaches later. A VPS (like a dedicated server) is not a "piece of cake" for a novice webmaster. You've been warned.

This review was written after one year of using Linode services for one of my customers, Cartalia.com.

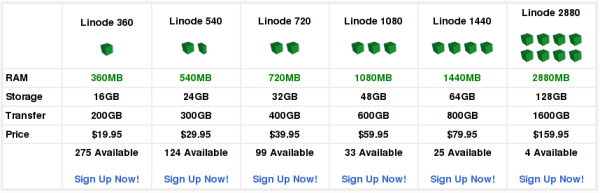

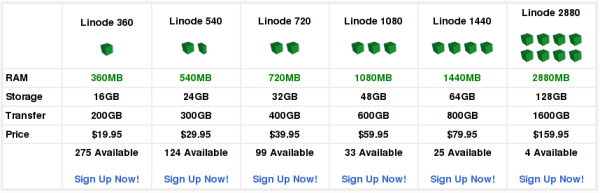

Plans

Linode is not the most expensive VPS provider out there. Plans start from 20 USD/mo for 360 MB of RAM, which is sufficient for many typical web applications.

I selected the lowest plan, Linode 360.

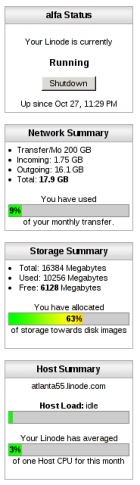

Panel

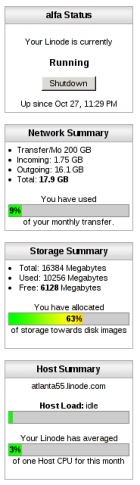

Linode uses a powerful VPS panel developed in house that allows you to:

- start/stop/reboot VPS

- manage DNS records

- manage disk space (make, resize partitions), etc.

- see statistics (IO/net/CPU usage)

- manage support tickets

- buy add-ons (additional IPs, memory, disk space)

- pay for services (by credit card)

You can also create many user accounts and assign them permissions (for example, to manage one DNS domain). It's very useful if you want to split administration between several people.

DNS record management is intuitive. Of course you can check your assigned IP addresses and set reverse DNS for your server.

A very interesting option is the Lish console. This mechanism allows you to log in to your VPS after you have messed up your firewall settings. You can compare this access channel to having a physical keyboard and monitor attached to the machine. Lish comes in two forms:

- AJAX web-based console

- SSH server

Personally I prefer SSH access (faster). Access to the Lish console can be set up using passwords or public keys (RSA, for instance). Key-based login eliminates password entry every time you want to access the machine.

The ticket system is very simple but effective (and most importantly: there's someone behind it who responds fast). I used it only occasionally:

- switching the billing scheme from monthly to hourly

- 1 issue with panel (missing IO graph)

- 2 issues with VPS (not accessible)

All requests were resolved quickly and I'm satisfied with the service.

Platform

It's Xen, so:

- your filesystem is exclusively yours (no inode limits as in OpenVZ)

- memory assigned is truly yours (no burst/fake ram on swap partition as in OpenVZ)

- dedicated SWAP is present

Linode offers 32-bit guest systems, which means lower memory usage than 64-bit. You can safely stick to the 32-bit version if your memory requirements are lower than 4 GB (if they are bigger, why bother with VPSes?).

Linode offers the following locations (I bolded out locations that are best for Europe customers):

- London, GB, UK (new)

- Newark, NJ, USA

- Atlanta, GA, USA

- Dallas, TX, USA

- Fremont, CA, USA

I'm using the Newark and Atlanta locations (London was not available one year ago). Both seem to be pretty stable.

Reliability

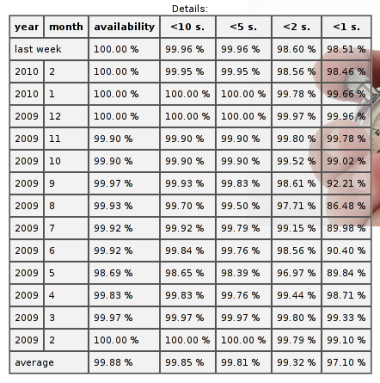

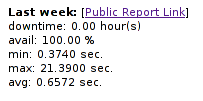

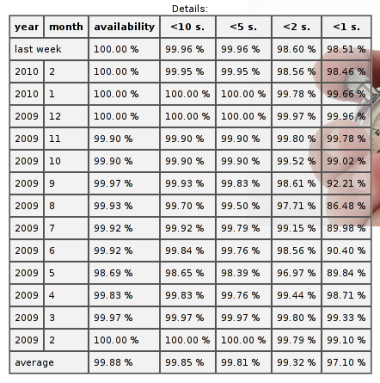

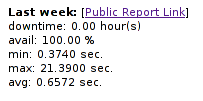

This is the availability, measured by site-uptime.net, of one of the websites hosted with Linode (details).

As you can see, availability is really good.

Summary

I rate this service "A" grade.

Sat, 06 Feb 2010 23:00:26 +0000

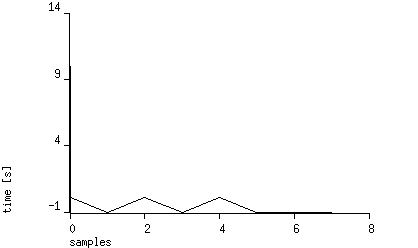

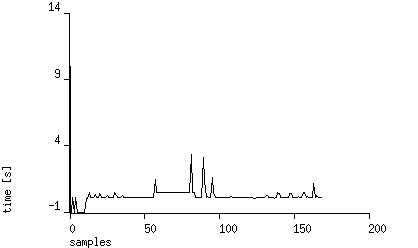

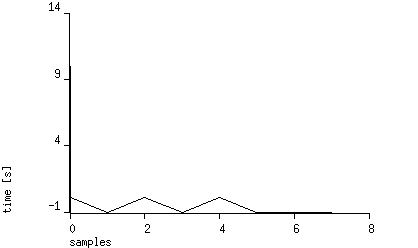

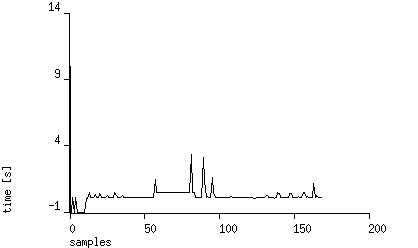

Today I tried to log in to GL and noticed that the service was not working as it should (frequent timeouts in the browser). I decided to take a closer look at the server and set up a monitor for it using site-uptime.net (interval: 15 minutes, method: HEAD HTTP/1.0). Here is the result after two hours of monitoring (a negative value means the server was unavailable):

Very interesting. On the next attempt to open the page I got the following message, served from the URL http://www.goldenline.pl/offline.html:

The outage is probably planned; of course I promise to keep my fingers crossed for the portal's staff ;-)

Here is what I saw inside the HTML file:

<a href="http://www.goldenline.pl"><img src="logo_big.gif" alt="Praca w GoldenLine" /></a>

The alt text means "Work at GoldenLine". Read it as: "we have stability problems, perhaps you are a competent admin who could help us with that, you are welcome to apply" ;-). In another place:

<!--<strong>GL wraca o 05:00 jeszcze szybszy ;)</strong>-->

The comment says "GL returns at 05:00, even faster ;)". That's quite a large maintenance window ;-)

UPDATE (2010-02-08): the situation was brought under control before 5 AM, as the chart shows:

Sat, 06 Feb 2010 22:33:06 +0000

Aplikacja.info believes in continuous integration and frequent releases, so it uses a so-called stable trunk policy. That means that at any time any developer can install from trunk to production systems. How can a stable trunk be achieved?

- any commit to trunk is protected by a set of automated tests that should run 100% clean (more critical projects will use a framework for a continuous build process, like Cruise Control or PQM).

- "risky" tasks go through a peer code review process based on version control software

What to review?

We believe the most efficient method of reviewing code is to look at changesets (unified diffs). The reviewer can then see the exact changes made by another developer to meet the task criteria, and the codebase to review is reduced to the really important parts (changes with some lines of context). That's why we believe forming proper changesets is a very important requirement.

Commit methods

There are three main methods of inserting new commits into trunk (specified in our implementation by a special "target" field in the bug tracker):

- Direct commit (target=master) without code review: used mainly for the simplest functionality without much risk involved. Minimal administrative burden, minimal merge conflicts.

- Patch Based Review (target=patch) using PMS functionality for one-way code review: in this scenario only one developer is supposed to modify the patch, and another one only reviews it. At least two developers are involved; merge conflicts are minimized by basing the patch on trunk (the patch is always rebased on trunk during development).

- Shared branch (target=branch) using VCS: development happens on a separate branch and is reviewed on that branch. Many developers can commit to and review this branch before the merge. There is a higher risk of merge conflicts when development on the branch takes a long time.

A convention we use: the patch / branch name should contain a noun from the task summary and the task ID, separated by a dash:

noun-task_id

for example: invoice-2351. This way we can easily find topic branches and point precisely to the task specification (by task ID).

Peer Code Review

Where's the peer code review here? Three modes of commit give three review configurations:

- Direct commit: the changeset is reviewed after it is committed to trunk (as I said, lower risk allows us to be flexible)

- Patch-based (read-only) changeset view: the patch is sent to another team member for review by email or through a shared patches repository

- Classical "topic branch" (read/write review): a team member switches to the topic branch and checks the latest commits; he may also place comments in the source code

Good Old TODOs

What is the result of a code review? The most efficient way to track the problems found is to insert comments into the source code, near the problematic location. We prepared a convention of well-known TODO tags in source code: TODO:nick description. "nick" is an abbreviation identifying the comment's recipient; the description should be short and valuable. Here's an example:

/*

TODO:DCI VAT report summary should show taxes grouped by rates,

not the case now

*/

The recipient then scans all source code for such TODOs and fixes the problems found (removing the TODO in the same commit). This way:

- we can connect the problem report with the problem fix in one changeset

- we can track who entered the comment and who fixed it (from SVN history)
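Scanning for these tags is simple to automate. A minimal sketch in Python follows; the function name find_todos and the choice to report only the first line of each description are assumptions made for this example.

```python
import re
from collections import defaultdict

# "TODO:nick description" where nick identifies the recipient.
TODO_TAG = re.compile(r"TODO:(\w+)\s+(.+)")


def find_todos(source_text):
    """Group TODO tags by recipient as (line number, first line of description)."""
    found = defaultdict(list)
    for number, line in enumerate(source_text.split("\n"), start=1):
        match = TODO_TAG.search(line)
        if match:
            found[match.group(1)].append((number, match.group(2).strip()))
    return dict(found)
```

Each developer can then look up his own nick in the result and see where reviewers left him something to fix.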

Summary

Code inspections have been found to be a very effective method of raising software quality (the IBM story). I believe the informal variant called peer review plays very well with the other agile tools we use to develop better software.

Sat, 06 Feb 2010 21:56:52 +0000

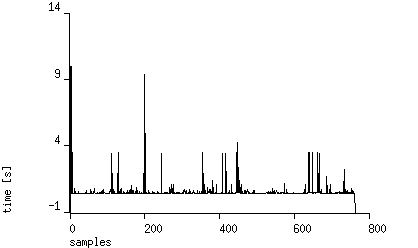

Twitter is a "free social networking and microblogging service that enables its users to send and read messages known as tweets". It has been gaining popularity in recent months, and its user base is growing rapidly. It's interesting to analyse the load on Twitter's server infrastructure by observing service response time over a few days.

One week HTTP measurement

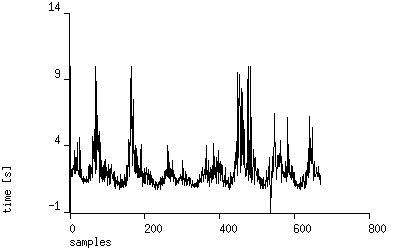

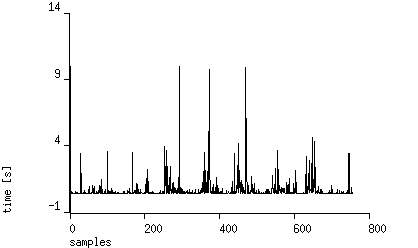

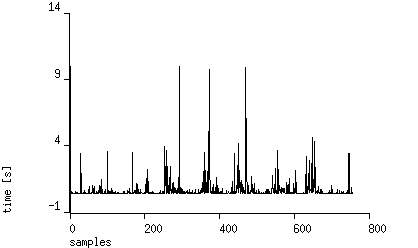

I'm using another service, site-uptime.net, to record and analyse statistics for the running Twitter website. Measurements are performed every 15 minutes using HEAD requests; the total time to receive the response (excluding DNS resolution time) is collected. Here are the results, showing data collected over eight days using a station in Poland:

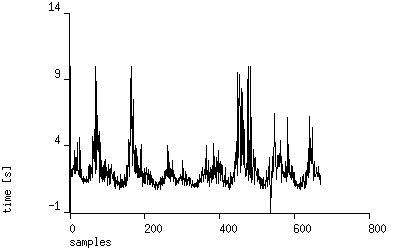

You can see response time spikes every day around 10 PM (GMT+2). Are they related to server load or to the network? To check that, we can look at measurements taken from a station in Chicago, USA:

The picture is quite different. It seems that traffic on the network connection to Europe causes the higher access times. Let's see if it has an impact on the speed of serving responses:

Not so bad. ~90% of requests are served in under 1 second, which is a very good result. The average response time is 650 ms, the minimum 370 ms. Note there were no downtimes in January, a good result.
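For readers who want to try a similar measurement themselves, here is a rough sketch in Python. It assumes plain HTTP over IPv4 and is not how site-uptime.net is implemented; the function names timed_head and summarize are made up for this example. The host name is resolved before the clock starts, so DNS time is excluded, and the summary computes the same three figures quoted above.

```python
import http.client
import socket
import time
from statistics import mean


def timed_head(host, port=80, path="/", timeout=10.0):
    """Send one HEAD request and return (status, seconds), excluding DNS time."""
    # Resolve the name first so the lookup is not part of the measurement.
    address = socket.getaddrinfo(host, port, socket.AF_INET, socket.SOCK_STREAM)[0][4][0]

    started = time.monotonic()
    connection = http.client.HTTPConnection(address, port, timeout=timeout)
    try:
        # Connect by IP address but keep the original name in the Host header.
        connection.request("HEAD", path, headers={"Host": host})
        response = connection.getresponse()
        response.read()
        status = response.status
    finally:
        connection.close()
    return status, time.monotonic() - started


def summarize(samples_ms, threshold_ms=1000):
    """Share of samples under the threshold, plus average and minimum."""
    if not samples_ms:
        raise ValueError("no samples collected")
    under = sum(1 for sample in samples_ms if sample < threshold_ms)
    return {
        "share_under_threshold": under / len(samples_ms),
        "average_ms": mean(samples_ms),
        "minimum_ms": min(samples_ms),
    }
```

Calling timed_head once every 15 minutes (from cron, for example) and feeding the collected times, converted to milliseconds, into summarize gives numbers comparable to the ones in this post.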

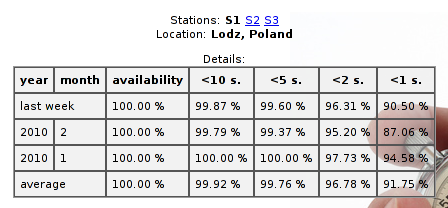

Where's Twitter located?

Let's see where the twitter.com web server is located:

$ mtr twitter.com

(...)

6. te3-1.lonse1.London.opentransit.net 0.0% 27 48.2 48.8 47.8 49.8 0.5

7. xe-3-1.r01.londen03.uk.bb.gin.ntt.net 0.0% 27 48.6 96.8 48.1 244.9 67.3

8. ae-1.r22.londen03.uk.bb.gin.ntt.net 0.0% 27 50.3 50.9 48.9 72.3 4.6

9. as-0.r20.nycmny01.us.bb.gin.ntt.net 0.0% 27 131.7 129.7 127.7 132.7 1.4

10. ae-0.r21.nycmny01.us.bb.gin.ntt.net 0.0% 27 124.1 125.3 122.6 136.4 2.8

11. as-0.r20.chcgil09.us.bb.gin.ntt.net 0.0% 27 141.4 146.0 139.8 172.6 9.1

12. ae-0.r21.chcgil09.us.bb.gin.ntt.net 0.0% 27 144.7 147.4 143.7 181.2 7.7

13. as-5.r20.snjsca04.us.bb.gin.ntt.net 0.0% 27 199.7 210.2 199.7 281.7 18.4

14. xe-1-1-0.r20.mlpsca01.us.bb.gin.ntt.net 0.0% 27 203.7 204.2 198.9 242.5 8.0

15. mg-1.c20.mlpsca01.us.da.verio.net 0.0% 26 200.1 200.2 195.2 207.2 2.5

16. 128.241.122.117 0.0% 26 196.7 197.6 193.1 209.1 2.7

17. 168.143.162.5 0.0% 26 201.7 226.6 196.2 278.5 28.2

18. 168.143.162.36 0.0% 26 196.4 212.4 195.8 261.2 21.0

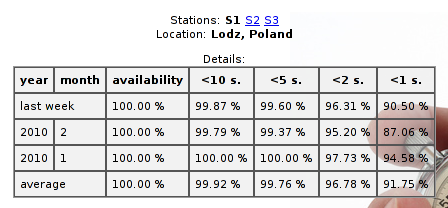

Far from Europe (200 ms). The geoiptool service shows more details:

The servers are located in Colorado. The best time I can get from the USA is ~110 ms (tested from Newark). It seems European Twitter users are in a worse position, but this kind of service (short messages, easily compressible text content) works fine with such packet delays.

About Measurement Service

site-uptime.net allows you to measure any website and notifies you of downtimes. You can check how your website is visible to customers 24/7 and track any problems (even if they appear outside your local business hours).

Sat, 06 Feb 2010 14:12:46 +0000

During software development (especially when done in an agile way) there are often times when a working software release must be prepared for customer evaluation or internal testing. I have found that many software release managers use a practice called "commit freeze": no one can commit to the main development branch (trunk/master) until the release is packaged. I doubt it is really required.

The possible reasons for freezing commits:

Creating releases

If you want to make minor changes related to the release and prevent other (probably more risky) changes from being accidentally introduced, you needn't freeze commits. The more efficient solution here is to fork a branch. On a separate branch you can make any adjustments you need to build the binaries for the release.

Merging

For time-consuming merges (especially when many conflicts are present) it's tempting to block commits on the target branch, so that ongoing development does not interfere when the merged changes are committed. I think the person merging should instead perform frequent updates from the current branch and adapt the merged changes to the current trunk state.

Switch version control software / repositories

Switching between version control systems is a big change for a development team. One has to learn a new toolset to work efficiently with the new version control system. Postponing commits to the old repository is not required. Those changes can be reapplied later by creating patches from the missing changesets and applying them to the new repository. The patch format is a standard that allows changesets to be moved between different repositories.

Summary

In my opinion temporarily blocking commits (a so-called "commit freeze") is not a good idea. Agile methodology (the one we use at Aplikacja.info) requires frequent information sharing. There are alternatives that have a lower impact on development and do not get in the way of normal code flow.
