About This Site

Software development stuff


Entries tagged "java".

Thu, 22 Feb 2007 21:27:57 +0000

J2EE is, in simplified terms, a set of Java-based technologies for building applications accessible over a network. Its flexibility and configurability, based mainly on XML files, mean that more and more errors end up outside the compiler's control.

In older systems most of the configuration of interactions between parts of the system was described in code and as such could be checked (at least partially) at compile time. The trend of moving part of this information out of Java code unfortunately means that, in exchange for flexibility and apparent ease of change, we push errors to run time.

The complexity of the J2EE architecture makes the work cycle (recompile, deploy, test in the browser) long. This makes fixing bugs by the "fix and click through" method much harder. What can be done to speed up bug removal in J2EE?

Static Verification

Most of us associate static verification of software with computer-aided systems for proving program correctness. The idea is to catch, without running the program, all errors that can occur in the system. Thanks to this, the testing phase (in theory) only confirms that the program is written correctly. However, the idea hides several serious difficulties that computer science has not yet managed to overcome:

- In practice, writing a formal specification of functionality is difficult and labor-intensive

- Verification tools are hard to use and require long practice before they can be used productively

- It is not always possible to check a program against its formal specification automatically (human intervention is required)

As we can see, full static verification cannot be applied to typical systems. But can't we check some properties of a system statically?

Resources in J2EE

For the purposes of this article, by a resource I mean an element of a J2EE application that can be analyzed independently and that is bound to the other elements after deployment in the application server. One such resource is, for example, Java code, which is analyzed by the compiler during compilation. Below I list a few selected "resources":

- Java code (possible access: regular expressions, reflection)

- Struts configuration (possible access: SAX parser)

- JSP files (possible access: SGML parser)

- Tiles configuration (possible access: SAX parser)

- Translations in ApplicationResources_*.properties (Java standard library)

- ...

What can we gain by handling these resources statically? It is best explained by describing typical errors in a J2EE application that are usually discovered only when it runs in the application server.

- An exception on a page caused by an html:text tag whose property attribute value does not exist as an attribute of the form bean

- A typo in the action attribute of an html:form tag, which causes a "page not found" error for the given URL

- A missing translation for static text shown on a page with the bean:message tag

- An invalid name attribute in one of the Struts tags, which causes an exception

Finding errors of this kind, especially when the project is under constant modification, is inefficient and tiring. Wouldn't it be simpler to get a complete list of such errors within a few seconds instead of wasting testers' time on such obvious defects?

I believe a tester's job should be to check conformance with the specification, and errors caused by typos should no longer show up in that phase. How can we statically pick out the classes of errors described above?

- A unit test that, starting from an html:text tag (SGML parser), finds the form bean used by that tag and checks whether the bean has the attribute named by property

- The action attributes can be validated by comparing them with the actions defined in the Struts configuration file

- For every occurrence of bean:message, check that an entry exists in the translations file

- A wrong name means a reference to a bean under a nonexistent name
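The bean:message check from the list above can be sketched in a few lines of plain Java. This is only a minimal illustration under some assumptions: a regular expression stands in for a real SGML parser, and the class name MessageKeyCheck, the method missingKeys and the sample keys are made up for the example.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Properties;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

/** Sketch: report bean:message keys used in JSP source that have no translation. */
final class MessageKeyCheck {

    // Matches <bean:message ... key="some.key" ...>; a regex stands in for an SGML parser.
    private static final Pattern BEAN_MESSAGE =
            Pattern.compile("<bean:message\\b[^>]*\\bkey\\s*=\\s*\"([^\"]+)\"");

    /** Returns every key found in the JSP text that is absent from the translations. */
    static List<String> missingKeys(String jspSource, Properties translations) {
        List<String> missing = new ArrayList<>();
        Matcher m = BEAN_MESSAGE.matcher(jspSource);
        while (m.find()) {
            String key = m.group(1);
            if (!translations.containsKey(key)) {
                missing.add(key);
            }
        }
        return missing;
    }

    public static void main(String[] args) {
        // Hypothetical content of ApplicationResources.properties.
        Properties translations = new Properties();
        translations.setProperty("login.title", "Log in");

        // Hypothetical JSP fragment with one translated and one untranslated key.
        String jsp = "<h1><bean:message key=\"login.title\"/></h1>"
                   + "<p><bean:message key=\"login.hint\"/></p>";

        System.out.println("Missing translations: " + missingKeys(jsp, translations));
    }
}
```

Run as a unit test over all JSP files, a check like this reports typos in message keys within seconds, without deploying anything.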

Implementations

I have managed to apply the techniques above to the following J2EE configurations:

- JSF: parsing Java and JSP with regular expressions, parser and verifier written in AWK

- Struts: SGML parser for JSP, Java beans accessed through reflection

- Hibernate: static analysis of HQL query parameters against the bean that holds the parameters
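For the Struts variant, the reflection side of the check might look roughly like the sketch below. It is not the original implementation: the LoginForm bean and the names FormBeanPropertyCheck and hasReadableProperty are hypothetical, and only simple (non-nested) property names are handled.

```java
/** Sketch: does a form bean expose the property named in an html:text tag? */
final class FormBeanPropertyCheck {

    /** Hypothetical form bean, standing in for a real Struts ActionForm. */
    public static class LoginForm {
        private String userName;
        public String getUserName() { return userName; }
        public void setUserName(String userName) { this.userName = userName; }
    }

    /** True when the class has a public getX() or isX() accessor for the property. */
    static boolean hasReadableProperty(Class<?> beanClass, String property) {
        if (property == null || property.isEmpty()) {
            return false;
        }
        String suffix = Character.toUpperCase(property.charAt(0)) + property.substring(1);
        for (String prefix : new String[] {"get", "is"}) {
            try {
                beanClass.getMethod(prefix + suffix);   // public no-argument accessor
                return true;
            } catch (NoSuchMethodException e) {
                // Not found under this prefix, try the next one.
            }
        }
        return false;
    }

    public static void main(String[] args) {
        System.out.println(hasReadableProperty(LoginForm.class, "userName"));
        System.out.println(hasReadableProperty(LoginForm.class, "usrName")); // typo in the JSP
    }
}
```

The property names would come from the JSP parser and the bean class from the Struts configuration; both lookups are left out here.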

Fri, 11 Sep 2009 09:27:58 +0000

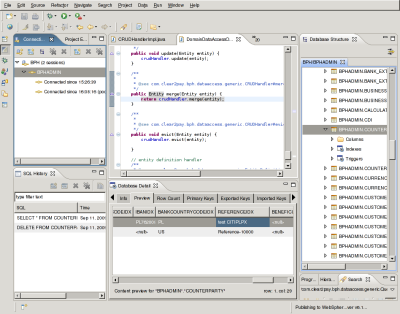

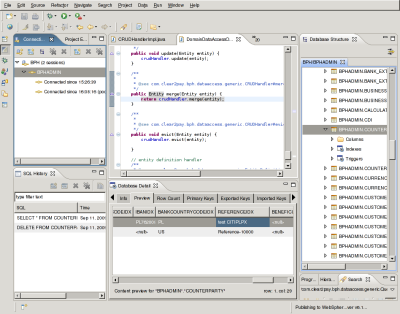

Eclipse SQL Explorer is a simple but powerful tool for inspecting the state of your database. You can:

- execute SQL commands

- browse database objects in Eclipse (including existing indices)

- open multiple databases at once

Installation is pretty simple:

- Download your preferred version (any sqlexplorer_plugin-*.zip file)

- Unpack the ZIP into your Eclipse directory: unzip sqlexplorer_plugin-*.zip

- Restart Eclipse

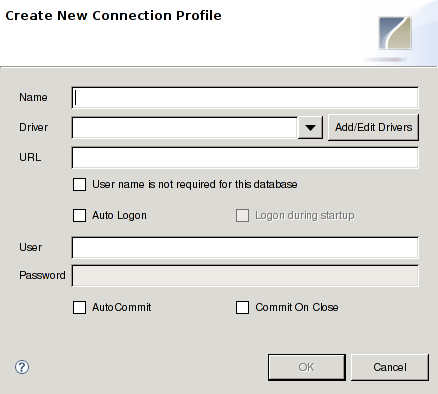

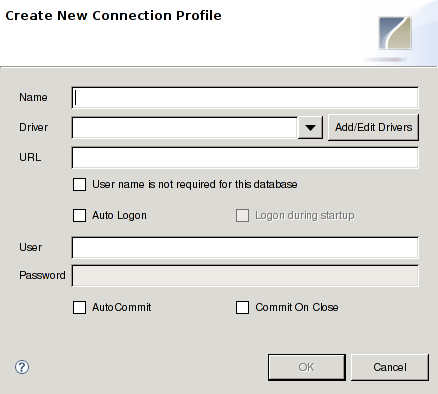

Usage: switch to the SQL Explorer perspective and define a new connection:

If the driver you need is not on the list, you have to configure a new driver by selecting its JDBC jar file from your disk.

By default the following views are present in the SQL Explorer perspective:

- Connection list

- SQL commands history

- SQL editor window

- Database details (table structure, indices are here)

- Database structure (list of tables, views etc.)

Enjoy!

Wed, 16 Sep 2009 09:21:01 +0000

<link>http://blog.aplikacja.info/blog/2009/09/16/monitor-heap-size-in-eclipse/</link>

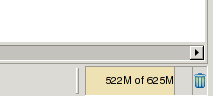

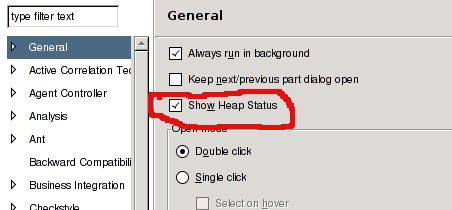

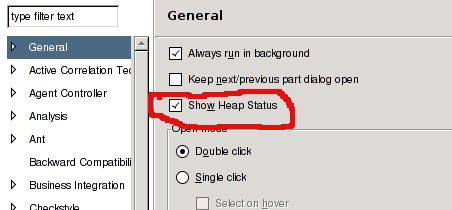

A very useful feature recently added to the Eclipse IDE is a display of the current heap size. You can see how the way you use Eclipse affects memory consumption and optimize your workflow accordingly. The monitor sits in the bottom right corner of the main window, and it also lets you run the garbage collector manually (trash icon):

You can enable it on the General preference page:

Wed, 16 Sep 2009 20:34:32 +0000

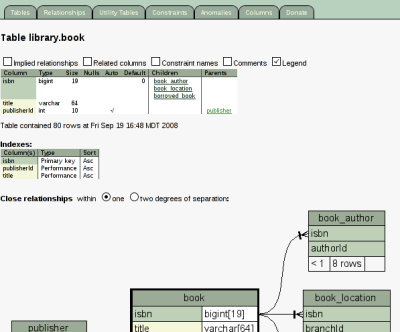

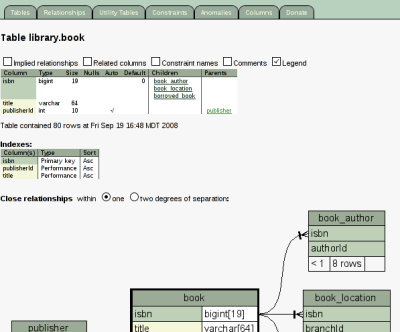

When you join an existing project you don't know its internal structure well. Existing documentation may be inaccurate or simply missing. In that case you can rely only on a clean source code structure and source code comments. Based on them you can generate API docs using Javadoc or Pydoc, for instance. But what about the persistent data model stored in the database? SchemaSpy comes to help here!

SchemaSpy is a small (~200 kB) Java application that renders a schema retrieved over a JDBC connection into a set of hyperlinked HTML pages with JavaScript and (if GraphViz is installed) diagrams. Below you can see one page of example output from this tool:

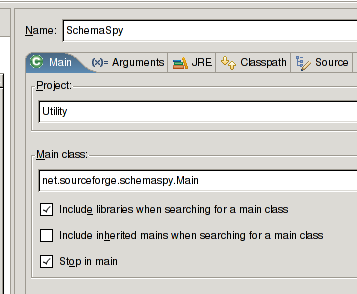

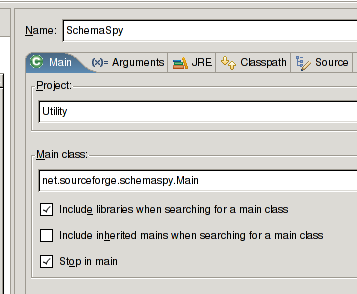

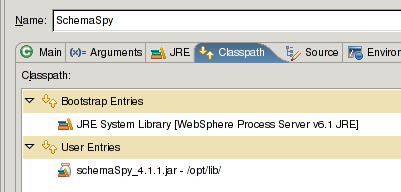

Installation is very simple. I'll describe the integration with Eclipse. First, download the jar with the application. Then create a new Run configuration in Eclipse:

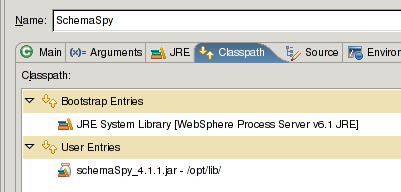

Add the downloaded jar to the classpath:

Then fill in the program arguments. My preferred list is as follows:

-t orathin -db XE -u <db-user> -p <db-password> -o /opt/c2p/schema-docs -dp /opt/lib/ojdbc14.jar -host localhost -port 1521 -schema <db-schema> -noimplied

"orathin" is the database type and ojdbc14.jar contains the JDBC driver for this connection type. The "-noimplied" option makes documentation generation faster and saves some disk space on big projects.

The only feature I miss is a way to disable rendering of attributes on diagrams (some association-rich tables produce very big images that can kill the Firefox browser). It's open source, so I hope to fix this soon and of course release a patch :-)

Thu, 01 Oct 2009 12:23:47 +0000

When opening a JSP file in visual mode in Eclipse (WebSphere Integration Developer 6.1.2) I get the following error (under Debian Lenny):

Caused by: java.lang.UnsatisfiedLinkError: /opt/IBM/WID61/configuration/org.eclipse.osgi/bundles/2374/1/.cp/libswt-mozilla-gtk-3236.so (libxpcom.so: cannot open shared object file: No such file or directory)

I checked the shared library dependencies:

$ ldd /opt/IBM/WID61/configuration/org.eclipse.osgi/bundles/2374/1/.cp/libswt-mozilla-gtk-3236.so

linux-gate.so.1 => (0xb7fa9000)

libxpcom.so => not found

libnspr4.so => /usr/lib/libnspr4.so (0xb7f4a000)

libplds4.so => /usr/lib/libplds4.so (0xb7f46000)

libplc4.so => /usr/lib/libplc4.so (0xb7f42000)

libstdc++.so.6 => /usr/lib/libstdc++.so.6 (0xb7e54000)

libm.so.6 => /lib/i686/cmov/libm.so.6 (0xb7e2e000)

libgcc_s.so.1 => /lib/libgcc_s.so.1 (0xb7e21000)

libc.so.6 => /lib/i686/cmov/libc.so.6 (0xb7cc6000)

libpthread.so.0 => /lib/i686/cmov/libpthread.so.0 (0xb7cac000)

libdl.so.2 => /lib/i686/cmov/libdl.so.2 (0xb7ca8000)

/lib/ld-linux.so.2 (0xb7faa000)

and checked ldconfig:

$ sudo ldconfig -v | grep libxpcom

libxpcom.so.0d -> libxpcom.so.0d

libxpcomglue.so.0d -> libxpcomglue.so.0d

The library is installed in the system:

$ dpkg -S libxpcom.so

libxul0d: /usr/lib/xulrunner/libxpcom.so

libxul0d: /usr/lib/libxpcom.so.0d

xulrunner-1.9: /usr/lib/xulrunner-1.9/libxpcom.so

icedove: /usr/lib/icedove/libxpcom.so

I linked the missing libraries:

cd /usr/lib

sudo ln -s libxpcom.so.0d libxpcom.so

sudo ln -s libxpcomglue.so.0d libxpcomglue.so

That's all!

Tue, 06 Oct 2009 10:11:33 +0000

I assume you're a developer and want to control the global log level of the output you get in the Eclipse console window. Just the log level. You don't want to learn all the Log4J machinery for creating multiple log files, customizing the logging format etc. I'll describe the simplest steps to achieve this.

First, create a commons-logging.properties file in your src/ directory (or any directory on your classpath):

org.apache.commons.logging.Log=org.apache.commons.logging.impl.SimpleLog

Next, create simplelog.properties in the same location:

org.apache.commons.logging.simplelog.defaultlog=warn

org.apache.commons.logging.simplelog.showlogname=false

org.apache.commons.logging.simplelog.showShortLogname=false

org.apache.commons.logging.simplelog.showdatetime=false

That's all! Your app will now log at WARN level and above in a short, one-line format. Isn't it simple?

If you want to give different log levels (info, for instance) to different packages:

org.apache.commons.logging.simplelog.log.<package prefix>=info
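To see how a package prefix entry interacts with defaultlog, here is a small stand-alone model of the lookup as I understand it: SimpleLog first tries the full logger name, then progressively shorter prefixes, and finally falls back to defaultlog. The code below is not the library's source, only a sketch of that rule; the class name SimpleLogLevelLookup and the com.example packages are made up.

```java
import java.util.Properties;

/** Sketch: model of how a per-package level is chosen from simplelog.properties. */
final class SimpleLogLevelLookup {

    private static final String PREFIX = "org.apache.commons.logging.simplelog.";

    /** Resolves the level for a logger: exact entry, then shorter prefixes, then the default. */
    static String effectiveLevel(Properties config, String loggerName) {
        String name = loggerName;
        String level = config.getProperty(PREFIX + "log." + name);
        int dot = name.lastIndexOf('.');
        while (level == null && dot > -1) {
            name = name.substring(0, dot);                 // drop the last name segment
            level = config.getProperty(PREFIX + "log." + name);
            dot = name.lastIndexOf('.');
        }
        if (level == null) {
            // Assumption: "info" is used when defaultlog is not configured at all.
            level = config.getProperty(PREFIX + "defaultlog", "info");
        }
        return level;
    }

    public static void main(String[] args) {
        Properties config = new Properties();
        config.setProperty(PREFIX + "defaultlog", "warn");
        config.setProperty(PREFIX + "log.com.example.dao", "info");   // hypothetical package

        System.out.println(effectiveLevel(config, "com.example.dao.UserDao"));
        System.out.println(effectiveLevel(config, "com.example.web.LoginAction"));
    }
}
```

In other words, the most specific matching prefix wins, so one line per noisy package is enough.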

Thu, 26 Nov 2009 08:57:15 +0000

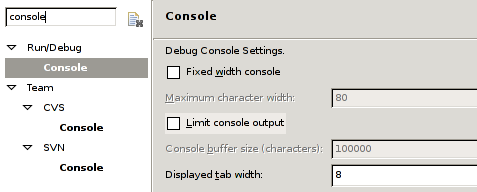

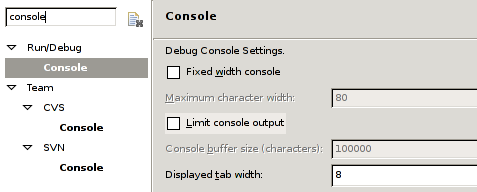

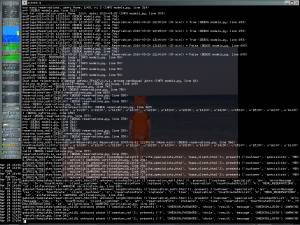

Sometimes Eclipse stops responding and uses 100% of the CPU (UI controls are not redrawn). The only action you can take then is to kill the Eclipse process.

I discovered that it's caused by large console output. A test suite logging low-level (DEBUG) messages is able to kill the Eclipse IDE; after setting the log level to WARN the problem goes away.

You can also disable the "Limit console output" option. It helped when verbosity could not be changed through logging configuration.

Wed, 24 Mar 2010 14:31:27 +0000

I bet everyone knows how to enable SQL logging in Hibernate. If you add this parameter to the Hibernate configuration:

<property name="hibernate.show_sql">true</property>

you will see queries like this in log file:

select roles0_.EntityKey as Entity9_1_, roles0_.ENTITYKEY as ENTITY9_168_0_

from USERROLE roles0_

where roles0_.EntityKey=?

But wait: what value does Hibernate pass as the parameter? (Parameters are marked as "?" in JDBC.) You have to configure the TRACE level for some log categories; here's an example for simplelog.properties:

org.apache.commons.logging.simplelog.log.org.hibernate.SQL=trace

org.apache.commons.logging.simplelog.log.org.hibernate.engine.query=trace

org.apache.commons.logging.simplelog.log.org.hibernate.type=trace

org.apache.commons.logging.simplelog.log.org.hibernate.jdbc=trace

For your convenience here's log4j configuration:

log4j.logger.org.hibernate.SQL=trace

log4j.logger.org.hibernate.engine.query=trace

log4j.logger.org.hibernate.type=trace

log4j.logger.org.hibernate.jdbc=trace

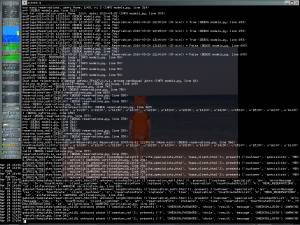

Then you will see all passed parameters and results in logs:

[DEBUG] (generated SQL here with parameter placeholders)

[TRACE] preparing statement

[TRACE] binding '1002' to parameter: 1

[TRACE] binding '1002' to parameter: 2

[TRACE] binding '1002' to parameter: 3

[DEBUG] about to open ResultSet (open ResultSets: 0, globally: 0)

[DEBUG] about to close ResultSet (open ResultSets: 1, globally: 1)

[DEBUG] about to close PreparedStatement (open PreparedStatements: 1, globally: 1)

[TRACE] closing statement

Nothing hidden here. Happy debugging!

Thu, 25 Mar 2010 09:58:10 +0000

Generics are a very useful Java language feature introduced in Java 1.5. Starting from 1.5 you can statically declare the expected types of objects inside collections, and the compiler will enforce these declarations during compilation:

Map<String, BankAccount> bankAccounts = new HashMap<String, BankAccount>();

bankAccounts.put("a1", bankAccount);

bankAccounts.put("a2", "string"); // compilation error: value is not a BankAccount

Integer x = bankAccounts.get("a1"); // compilation error: BankAccount is not an Integer

bankAccounts.put(new Integer("11"), bankAccount); // compilation error: key is not a String

Many projects, however, keep 1.4 compatibility mode for various reasons. I think 1.5 is mature enough (OK, let's say it: old) that it can be used safely.
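For contrast, here is a short sketch of what staying in 1.4 style costs: with a raw collection the same kind of mistake compiles and only fails when the value is read back. The class and method names are made up for the illustration.

```java
import java.util.HashMap;
import java.util.Map;

/** Sketch: the same mistake with a raw (1.4 style) map and with a generic map. */
final class RawVersusGeneric {

    /** Raw map: the wrong value type is accepted and fails only at run time. */
    @SuppressWarnings({"rawtypes", "unchecked"})
    static boolean rawMapFailsAtRuntime() {
        Map balances = new HashMap();
        balances.put("a1", "not a number");               // accepted by the compiler
        try {
            Integer balance = (Integer) balances.get("a1"); // explicit cast, and it is wrong
            System.out.println(balance);
            return false;
        } catch (ClassCastException e) {
            return true;                                   // the error surfaces here
        }
    }

    /** Generic map: the compiler rejects the wrong value type up front. */
    static Integer genericMapIsChecked() {
        Map<String, Integer> balances = new HashMap<String, Integer>();
        balances.put("a1", Integer.valueOf(100));
        // balances.put("a2", "not a number");             // would not compile
        return balances.get("a1");                         // no cast needed
    }

    public static void main(String[] args) {
        System.out.println("raw map failed at run time: " + rawMapFailsAtRuntime());
        System.out.println("generic map value: " + genericMapIsChecked());
    }
}
```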

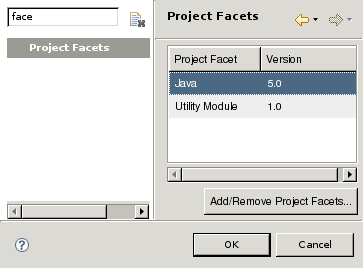

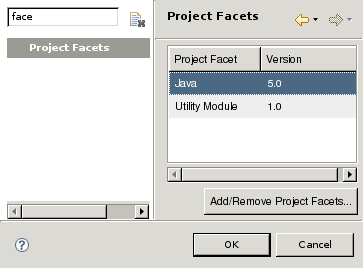

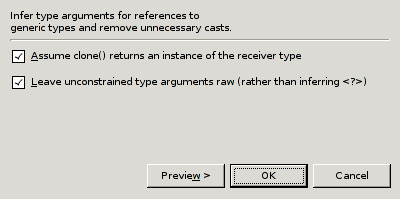

Recently I started migrating a big 1.4 project to 1.5 (in order to introduce generics). I changed the Java project compliance level and got the following error:

Java compiler level does not match the version of the installed Java project facet

Quick googling for this error showed two possible solutions:

- Right click on the project / Properties / Project Facets / change the Java version

- OR: right click on the error in the Problems view / select Quick Fix / choose the 1.5 compiler level

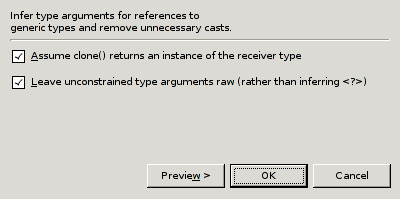

After this fix the workspace compiles without errors. Generics can be added now. Eclipse supports this refactoring very well: just select "Refactor / Infer Generic Type Arguments" and your current file will be filled in with generic arguments. Very nice!

And the best comes last: you can select the whole project for the generic type update, and Eclipse will insert generics wherever possible. It speeds up the refactoring greatly!

Wed, 14 Apr 2010 08:51:24 +0000

The second level cache in Hibernate can greatly speed up your application by minimizing the number of SQL queries issued and serving some results from an in-memory cache (with optional disk storage or a distributed cache). You have the option to plug in different cache libraries (or to Bring Your Own Cache - an approach popular among my colleagues from India ;-) ). There are caching limits you must be aware of when implementing a 2nd level cache.

ID-based entity lookup cache

Caching entity lookups by id is very straightforward:

<session-factory>

<property name="cache.provider_class">

com.opensymphony.oscache.hibernate.OSCacheProvider

</property>

(...)

</session-factory>

<class name="EntityClass" table="ENTITY_TABLE">

<cache usage="read-only" />

(...)

</class>

From then on, selecting EntityClass by id will use the cache (BTW, remember to set a cache expiration policy if the entity is mutable!). But querying by other entity attributes will not cache the results.

Query cache

Here so-called "query cache" jumps in:

<session-factory>

<property name="cache.use_query_cache">true</property>

(...)

</session-factory>

Query query = session.createQuery("from EntityClass"); // session: an open org.hibernate.Session

(...)

query.setCacheable(true);

query.list();

And voila! The queries issued (together with their parameters) are the keys of the cached results. A very important detail is the value stored in the cache: only entity keys and types are stored. Why is it important? Because it complicates caching SQL queries.
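The idea can be pictured with a toy model. The sketch below is not Hibernate code, only an illustration under simplifying assumptions: strings stand in for entities, a map stands in for the database table, and all names (QueryCacheModel, findByPrefix, queriesExecuted) are invented. It shows the two layers described above: the query cache maps (query, parameters) to a list of ids, and each id is then resolved through the entity cache.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.HashMap;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

/** Toy model (not Hibernate code) of a query cache that stores ids, not entities. */
final class QueryCacheModel {

    // "Database table": id -> entity state. Strings stand in for entities.
    private final Map<Integer, String> table = new LinkedHashMap<>();
    // Second level cache: id -> entity.
    private final Map<Integer, String> entityCache = new HashMap<>();
    // Query cache: (query text, parameter) -> ids of matching entities.
    private final Map<List<Object>, List<Integer>> queryCache = new HashMap<>();

    /** Counts how many times the "database" had to be queried. */
    int queriesExecuted = 0;

    QueryCacheModel() {
        table.put(1, "alice");
        table.put(2, "bob");
        table.put(3, "alan");
    }

    /** Finds entities starting with the prefix, consulting the query cache first. */
    List<String> findByPrefix(String prefix) {
        List<Object> cacheKey = Arrays.<Object>asList("value like ?", prefix);
        List<Integer> ids = queryCache.get(cacheKey);
        if (ids == null) {
            queriesExecuted++;                       // cache miss: run the query
            ids = new ArrayList<>();
            for (Map.Entry<Integer, String> row : table.entrySet()) {
                if (row.getValue().startsWith(prefix)) {
                    ids.add(row.getKey());
                    entityCache.put(row.getKey(), row.getValue());
                }
            }
            queryCache.put(cacheKey, ids);           // only the ids are remembered
        }
        // Every cached id must be resolvable on its own through the entity cache.
        List<String> result = new ArrayList<>();
        for (Integer id : ids) {
            result.add(entityCache.get(id));
        }
        return result;
    }

    public static void main(String[] args) {
        QueryCacheModel model = new QueryCacheModel();
        System.out.println(model.findByPrefix("al"));
        System.out.println(model.findByPrefix("al"));
        System.out.println("queries executed: " + model.queriesExecuted);
    }
}
```

Because only ids are stored, every cached row has to be something that can be loaded by its id.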

Caching SQL queries

The query cache requires the query result to be an entity known to Hibernate, for the reason I mentioned above. That makes it impossible to cache the following construct:

<!-- Map the resultset on a map. -->

<class name="com.company.MapDTO" entity-name="EntityMap">

<id name="entityKey" type="java.math.BigDecimal" column="ID" length="38"

access="com.company.MapPropertyAccessor" />

<property name="achLimit" type="java.lang.String" length="256" access="com.company.MapPropertyAccessor" />

</class>

<!-- alias is used in query -->

<resultset name="EntityMapList">

<return alias="list" entity-name="EntityMap" />

</resultset>

<sql-query name="NamedQueryName" resultset-ref="EntityMapList">

SELECT (...) AS {list.entityKey}

(...)

FROM TABLE_NAME

WHERE

(...)

</sql-query>

We will get the error:

Error: could not load an entity: EntityMap#1028

ORA-00942: table or view does not exist

EntityMap cannot be loaded separately by Hibernate because it's a DTO (data transfer object), not an entity. How to fix this error? Just change the result of the named query from the DTO to the corresponding entity. Then the retrieved entity will be cached properly by Hibernate.

Thu, 22 Apr 2010 09:02:40 +0000

WebSphere uses SQL databases for internal management of MQ queues (the Derby database engine under the covers). Sometimes you need to reset their state. Here's a script that erases and recreates the BPEDB database state (tested under WS 6.1.2):

rm -rf $WID_HOME/pf/wps/databases/BPEDB

echo "CONNECT 'jdbc:derby:$WID_HOME/pf/wps/databases/BPEDB;create=true' AS BPEDB;"|\

$WID_HOME/runtimes/bi_v61/derby/bin/embedded/ij.sh /dev/stdin

Thu, 29 Apr 2010 20:27:48 +0000

Recently I was asked to integrate WXS (WebSphere eXtreme Scale, a commercial cache implementation from IBM) into an existing WPS (WebSphere Process Server)-based project to implement a read-only, non-distributed cache (one independent cache instance per JVM). The idea is to plug in the cache implementation through the 2nd level cache interfaces defined by Hibernate.

I plugged objectgrid.jar into the Hibernate configuration:

<property name="cache.provider_class">

com.ibm.websphere.objectgrid.hibernate.cache.ObjectGridHibernateCacheProvider

</property>

then saw the following exception during Hibernate initialisation:

[4/29/10 10:08:34:462 CEST] 00000069 RegisteredSyn E WTRN0074E:

Exception caught from after_completion synchronization operation:

java.lang.NoSuchMethodError:

org/hibernate/cache/CacheException.<init>(Ljava/lang/Throwable; )V

at com.ibm.ws.objectgrid.hibernate.cache.ObjectGridHibernateCache.getSession(

ObjectGridHibernateCache.java:149)

at com.ibm.ws.objectgrid.hibernate.cache.ObjectGridHibernateCache.put(

ObjectGridHibernateCache.java:385)

at org.hibernate.cache.UpdateTimestampsCache.invalidate(

UpdateTimestampsCache.java:67)

WXS expects a CacheException(java.lang.Throwable) constructor, which the Hibernate API provides since version 3.2. Version 3.1, currently used in the project, doesn't have this constructor (only CacheException(java.lang.Exception) is present). This issue forced a Hibernate upgrade in the project. Note that no Hibernate version requirements are listed on the official "requirements" page (huh?).
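A mismatch like this can be detected up front with a few lines of reflection instead of waiting for a NoSuchMethodError at run time. The sketch below is a generic check, not something taken from WXS or Hibernate; ConstructorCheck and hasPublicConstructor are made-up names, and the Hibernate class is referenced only by its name so the code runs without Hibernate on the classpath.

```java
/** Sketch: check at startup whether a class offers the constructor a library expects. */
final class ConstructorCheck {

    /** True when the class can be loaded and has a public constructor with these parameter types. */
    static boolean hasPublicConstructor(String className, Class<?>... parameterTypes) {
        try {
            // Load without initializing the class; only its shape is of interest.
            Class<?> type = Class.forName(className, false, ConstructorCheck.class.getClassLoader());
            type.getConstructor(parameterTypes);
            return true;
        } catch (ClassNotFoundException | NoSuchMethodException e) {
            return false;
        }
    }

    public static void main(String[] args) {
        // Prints false when Hibernate is missing or too old for the (Throwable) constructor.
        System.out.println(hasPublicConstructor("org.hibernate.cache.CacheException", Throwable.class));
        // A JDK class that is known to have such a constructor, for comparison.
        System.out.println(hasPublicConstructor("java.lang.RuntimeException", Throwable.class));
    }
}
```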

Conclusions:

- BIG BLUE is not a guarantee for high quality documentation

- Closed source is harder to work with (any problem puts you in the support queue)

- "Big" != Good (IMHO), would you pay for read-only cache implementation $14,400.00 per PVU and deploy giant objectgrid.jar (15M) for this very purpose? Yikes!

I'm going to talk with project sponsor about better ways to spend money (my salary increase, maybe ;-) ). Seriously: free EHCache / OSCache are more than sufficient for the task. They are small and Open Source.

"Do not hire Godzilla to pull Santa's sleigh, reindeers are better for this purpose" - Bart Simpson.

Fri, 30 Apr 2010 14:27:39 +0000

Sometimes when you get an exception like this:

javax.naming.NameNotFoundException: Name "comp/UserTransaction" not found in context "java:"

you want to see which entries are visible in JNDI. No problem, place this code somewhere near the failing lookup:

// needs: javax.naming.InitialContext, javax.naming.NamingEnumeration, javax.naming.NameClassPair

InitialContext ic = new InitialContext();

NamingEnumeration<NameClassPair> it = ic.list("java:comp");

System.out.println("JNDI entries:");

while (it.hasMore()) {

NameClassPair nc = it.next();

System.out.println("JNDI entry: " + nc.getName());

}

You will then see all available JNDI names on the console (you can use your logging library instead, of course).

Sat, 01 May 2010 21:55:44 +0000

During recent 2nd level cache implementation research I noticed EHCache has a very funny dependency: slf4j. Hey, WTH, yet another log library implementation? - I asked myself. No commons-logging as everywhere?

I googled around and found the article "The evils of commons-logging.jar and its ilk". It highlights some problems related to commons-logging usage:

- Different commons-logging versions in one project mirror the DLL-hell problem

- Collecting logs from all sources into one stream has no great value for a developer

- Advanced configuration is logging back-end dependent (appenders for log4j, for example), so a unified layer is not valuable here

- Configuration is hard and not intuitive

I agree with 1, 3 and 4. 2 is questionable: sometimes logs sorted into one timeline allow for better error analysis.

slf4j is proposed as an alternative. It's more modular and (probably) simpler than commons-logging. All configuration is a matter of placing the jar of the selected implementation (slf4j-jdk14-1.5.8.jar, for instance) on the classpath. And voila! Logging is done through JDK 1.4 logging. Quite simple.

Wed, 19 May 2010 14:47:16 +0000

Hibernate is a library that maps database tables to Java objects. If performance problems arise, it's very easy to add database caching to an application using Hibernate (just a few options in the config file). Hibernate ships with EHCache, the default cache implementation. It works well and is easy to set up.

Sometimes you have to use another caching library that has no direct support for Hibernate. Here the Hibernate API comes into play. I'll show you how to plug WebSphere's DistributedMap into Hibernate.

First, you have to map get/put requests onto the desired API. org.hibernate.cache.Cache is the interface that must be implemented for this task. An instance of this class is created by Hibernate for every entity that will be cached (distinguished by regionName).

public class WebsphereCacheImpl implements Cache {

private DistributedMap map;

private String regionName;

public WebsphereCacheImpl(DistributedMap map, String regionName) {

this.map = map;

this.regionName = regionName;

}

public void clear() throws CacheException {

map.clear();

}

public Object get(Object key) throws CacheException {

return map.get(getMapKey(key));

}

public String getRegionName() {

return regionName;

}

public void put(Object key, Object value) throws CacheException {

map.put(getMapKey(key), value);

}

public Object read(Object key) throws CacheException {

return map.get(getMapKey(key));

}

public void remove(Object key) throws CacheException {

map.remove(getMapKey(key));

}

public void update(Object key, Object value) throws CacheException {

map.put(getMapKey(key), value);

}

private String getMapKey(Object key) {

return regionName + "." + key;

}

(...)

}

Then you have to prepare a factory for such objects:

public class WebsphereCacheProviderImpl implements CacheProvider {

private DistributedMap distributedMap;

public WebsphereCacheProviderImpl() throws NamingException {

InitialContext ic = new InitialContext();

distributedMap = (DistributedMap) ic.lookup("services/cache/cache1");

}

public Cache buildCache(String regionName, Properties arg1) throws CacheException {

return new WebsphereCacheImpl(distributedMap, regionName);

}

public boolean isMinimalPutsEnabledByDefault() {

return false;

}

public long nextTimestamp() {

return new Date().getTime();

}

public void start(Properties arg0) throws CacheException {

}

public void stop() {

}

}

Then the new factory class must be registered in the Hibernate configuration:

<property name="hibernate.cache.use_second_level_cache">true</property>

<property name="hibernate.cache.use_query_cache">true</property>

<property name="cache.provider_class">com.company.project.cache.WebsphereCacheProviderImpl</property>

And voila!

Thu, 27 May 2010 10:09:27 +0000

Sometimes you want to customize the logging level for a given package in your application (to see tracing details, for example). If you're using the commons-logging library, the configuration file is called "commons-logging.properties" and it should be placed somewhere on the classpath.

org.apache.commons.logging.Log=org.apache.commons.logging.impl.SimpleLog

priority=1

Then we can configure the SimpleLog back-end we just declared, in simplelog.properties:

org.apache.commons.logging.simplelog.defaultlog=warn

org.apache.commons.logging.simplelog.log.com.mycompany.package=debug

You place those two files on the classpath, redeploy the application and ... NOTHING HAPPENS. Those files aren't visible!

Why does this happen? Here's the explanation:

- The default WebSphere class loader mode is PARENT_FIRST, which means the WebSphere classpath is searched first

- WebSphere ships with its own commons-logging.properties that selects the JDK 1.4 logging back-end

That's why your commons-logging configuration is not visible.

How to fix that: place the files inside the /opt/IBM/WID61/pf/wps/properties directory (it's the first entry on the classpath). The files will then become visible and log level customisation will be possible.
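When it is unclear which copy of a configuration file wins, a small diagnostic can list every location of the file that a class loader sees. The sketch below uses only the standard ClassLoader.getResources call; the class name ClasspathResourceFinder and the method locate are made up, and where exactly to call it inside a deployed application is left open.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.net.URL;
import java.util.ArrayList;
import java.util.Enumeration;
import java.util.List;

/** Sketch: list every copy of a resource that a class loader can see. */
final class ClasspathResourceFinder {

    /** Returns all locations of the resource, in the order the loader reports them. */
    static List<URL> locate(ClassLoader loader, String resourceName) {
        List<URL> found = new ArrayList<>();
        try {
            Enumeration<URL> urls = loader.getResources(resourceName);
            while (urls.hasMoreElements()) {
                found.add(urls.nextElement());
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return found;
    }

    public static void main(String[] args) {
        ClassLoader loader = Thread.currentThread().getContextClassLoader();
        if (loader == null) {
            loader = ClassLoader.getSystemClassLoader();
        }
        for (String name : new String[] {"commons-logging.properties", "simplelog.properties"}) {
            System.out.println(name + " -> " + locate(loader, name));
        }
    }
}
```

If the server's own copy shows up in the output ahead of yours, the behaviour described above is the likely cause.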

Wed, 23 Jun 2010 07:54:31 +0000

When you set up your project with the commons-configuration library plugged in, you can sometimes get a very misleading error:

ConfigurationException: XML-22101: (Fatal Error) DOMSource node as this type not supported

This error may be caused by missing XML library bindings. In order to fix it you have to configure some service providers properly (point the JVM to the correct XML parsing/transformation implementations).

How to do that? You have to create the following directory structure somewhere on the classpath:

- META-INF

- META-INF/services

- META-INF/services/interface_name_files

Then inside the "services" directory place files named after the interfaces, each containing the full class name of an implementation, for example (Xerces + Xalan plugged in):

- src/META-INF/services/javax.xml.parsers.DocumentBuilderFactory, containing: org.apache.xerces.jaxp.DocumentBuilderFactoryImpl

- src/META-INF/services/javax.xml.parsers.SAXParserFactory, containing: org.apache.xerces.jaxp.SAXParserFactoryImpl

- src/META-INF/services/javax.xml.transform.TransformerFactory, containing: org.apache.xalan.processor.TransformerFactoryImpl

After this change the proper XML implementation should be used (if its jar is present on the classpath, of course).
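To verify which implementations are actually picked up, you can ask the JAXP factories themselves. The sketch below only uses the standard newInstance() methods; the class name JaxpProviders is made up. Without the service files it will typically print the JDK's built-in implementations, and with them the configured Xerces/Xalan classes.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import javax.xml.parsers.DocumentBuilderFactory;
import javax.xml.parsers.SAXParserFactory;
import javax.xml.transform.TransformerFactory;

/** Sketch: report which JAXP implementations are resolved in the current JVM. */
final class JaxpProviders {

    /** Maps each JAXP factory interface name to the implementation class in use. */
    static Map<String, String> resolved() {
        Map<String, String> result = new LinkedHashMap<>();
        result.put("javax.xml.parsers.DocumentBuilderFactory",
                DocumentBuilderFactory.newInstance().getClass().getName());
        result.put("javax.xml.parsers.SAXParserFactory",
                SAXParserFactory.newInstance().getClass().getName());
        result.put("javax.xml.transform.TransformerFactory",
                TransformerFactory.newInstance().getClass().getName());
        return result;
    }

    public static void main(String[] args) {
        for (Map.Entry<String, String> e : resolved().entrySet()) {
            System.out.println(e.getKey() + " -> " + e.getValue());
        }
    }
}
```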

Mon, 02 Dec 2013 22:58:49 +0000

JPA stands for "Java Persistence API" - an API that tries to replace direct Hibernate (or other ORM) usage with a more generic layer that allows replacing the underlying ORM with no application code changes.

Although ORMs (object-relational mappers) typically use a relational database under the hood, JPA is not restricted to relational databases. I'll show how an object database (ObjectDB) can be used through the JPA interface.

All the source code can be found here: https://github.com/dcieslak/jee-samples/tree/master/jpa.

Classes

Firstly, you have to define your model and relations using POJOs with JPA annotations. We try to keep the model as simple as possible, so no getter/setter pairs unless really required.

@Entity

class SystemUser implements Serializable {

@Id @Column @GeneratedValue(strategy=GenerationType.AUTO)

public Integer userId;

@Column

public String systemUserName;

@Column

public Departament department;

public String toString() {

return "SystemUser(" + systemUserName + ", " + department + ")";

}

}

A bit of explanation:

- @Entity: marks a class that will be mapped to a persistent entity. If the name of the corresponding table is the same as the class name you don't have to specify it explicitly, which makes the class description more compact.

- @Id: marks the given property as the primary identifier of the class. Uniqueness is assumed for the column.

- @GeneratedValue: the primary key must be assigned a unique value when the object is created. With this annotation you select the generation method for identifiers.

- @Column: a property of the persisted entity.

- Departament department: here we have a many-to-one relation to the Departament class. It's implemented as a foreign key pointing to the Departament table, with an index to speed up access.

- toString(): in order to be able to print an object description to stdout I've redefined the toString() method; it makes debugging much easier.

Departament can be defined in a very similar way.

Setup

In order to use JPA (apart from the classpath dependencies) you have to initialize the persistence engine. As stated above, we will use the ObjectDB implementation of JPA.

EntityManagerFactory factory = Persistence.createEntityManagerFactory(

"$objectdb/db/samplejpa.odb");

EntityManager em = factory.createEntityManager();

Here we use a database stored in the db/samplejpa.odb file, relative to the location where objectdb.jar is stored. Of course, for a production deployment a client-server architecture will be more efficient; we stick to a file for the sake of setup simplicity.

CRUD operations with JPA

CRUD operations look very familiar to anyone coming from the Hibernate world:

em.getTransaction().begin();

System.out.println("Clearing database:");

em.createQuery("DELETE FROM SystemUser").executeUpdate();

em.createQuery("DELETE FROM Departament").executeUpdate();

System.out.println("Two objects created with 1-N association:");

Departament d = new Departament();

d.departamentName = "Dept1";

em.persist(d);

SystemUser u = new SystemUser();

u.systemUserName = "User1";

u.department = d;

em.persist(u);

System.out.println("Querying database:");

TypedQuery<Departament> dq = em.createQuery("SELECT D FROM Departament D", Departament.class);

for (Departament d2: dq.getResultList()) {

System.out.println("Loaded: " + d2);

}

TypedQuery<SystemUser> sq = em.createQuery("SELECT S FROM SystemUser S", SystemUser.class);

for (SystemUser s: sq.getResultList()) {

System.out.println("Loaded: " + s);

}

em.getTransaction().commit();

Some notes on the code above:

- Every set of database updates must be enclosed in a transaction (begin() / commit())

- The TypedQuery<T> interface is a very nice extension to the old-style Query interface, which was not parametrized

- persist(obj) is responsible for storing an object in the database; the JPA implementation discovers the target table by reflection

- Queries that change database state are run with executeUpdate(); they can sometimes save much time when handling big database tables

Maven project

And finally: you have to build and run the whole example app. I've selected Maven as it makes collecting dependencies much easier (and you don't have to store jars in your source code repository).

<repositories>

<repository>

<id>objectdb</id>

<name>ObjectDB Repository</name>

<url>http://m2.objectdb.com</url>

</repository>

</repositories>

<dependencies>

<dependency>

<groupId>com.objectdb</groupId>

<artifactId>objectdb</artifactId>

<version>2.2.5</version>

</dependency>

</dependencies>

<build>

<plugins>

<plugin>

<groupId>org.apache.maven.plugins</groupId>

<artifactId>maven-compiler-plugin</artifactId>

<configuration>

<source>1.5</source>

<target>1.5</target>

</configuration>

</plugin>

<plugin>

<groupId>org.codehaus.mojo</groupId>

<artifactId>exec-maven-plugin</artifactId>

<version>1.2</version>

<executions>

<execution>

<phase>test</phase>

<goals>

<goal>java</goal>

</goals>

<configuration>

<mainClass>samplejpa.Main</mainClass>

</configuration>

</execution>

</executions>

</plugin>

</plugins>

</build>

In order to build the application execute mvn test; the output of the program run will be shown on screen.

Mon, 20 Oct 2014 21:26:38 +0000

Recently a new vulnerability ("POODLE") was discovered in the SSLv3 protocol. A man-in-the-middle attack can use the protocol version negotiation feature built into SSL/TLS to force the use of SSL 3.0 and then exploit the "POODLE" vulnerability.

In order to remove the threat from our servers we have to drop SSLv3 from the negotiation list. A secured server should respond as follows:

$ echo | openssl s_client -connect 192.168.1.100:80 -ssl3 2>&1 | grep Secure

Secure Renegotiation IS NOT supported

$ echo | openssl s_client -connect 192.168.1.100:80 -tls1 2>&1 | grep Secure

Secure Renegotiation IS supported

We use the openssl command to open an HTTPS connection and check whether the requested protocol can be negotiated or not.

And the fix itself (for a JBoss/Tomcat service): you have to locate the Connector tag responsible for the HTTPS connection and:

- remove any SSL_* from ciphers attribute

- limit sslProtocols="TLSv1, TLSv1.1, TLSv1.2"

Example:

<Connector port="80" protocol="HTTP/1.1" SSLEnabled="true" ciphers="TLS_RSA_WITH_AES_128_CBC_SHA, TLS_DHE_RSA_WITH_AES_128_CBC_SHA, TLS_DHE_DSS_WITH_AES_128_CBC_SHA"

maxThreads="100" scheme="https" secure="true" minSpareThreads="25" maxSpareThreads="50"

keystoreFile="${jboss.server.home.dir}/conf/tm.keystore" keystorePass="MyKeyStore1"

clientAuth="false" sslProtocols="TLSv1, TLSv1.1, TLSv1.2" />

It will effectively block any SSLv3 connections, as shown by the "openssl s_client" test above.
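The same restriction can be expressed in plain Java code when a component opens TLS connections itself rather than through the Connector. The sketch below is an illustration using the standard JSSE API, not part of the JBoss/Tomcat fix; the class name Sslv3Filter and the method withoutSslv3 are made up.

```java
import java.security.NoSuchAlgorithmException;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import javax.net.ssl.SSLContext;
import javax.net.ssl.SSLEngine;

/** Sketch: drop SSLv3 from the protocols enabled on a JSSE engine. */
final class Sslv3Filter {

    /** Returns the given protocol names with "SSLv3" removed, order preserved. */
    static String[] withoutSslv3(String[] protocols) {
        List<String> kept = new ArrayList<>();
        for (String protocol : protocols) {
            if (!"SSLv3".equals(protocol)) {
                kept.add(protocol);
            }
        }
        return kept.toArray(new String[0]);
    }

    public static void main(String[] args) throws NoSuchAlgorithmException {
        SSLEngine engine = SSLContext.getDefault().createSSLEngine();
        System.out.println("Supported: " + Arrays.toString(engine.getSupportedProtocols()));

        // Keep whatever the JVM enables by default, minus SSLv3.
        engine.setEnabledProtocols(withoutSslv3(engine.getEnabledProtocols()));
        System.out.println("Enabled:   " + Arrays.toString(engine.getEnabledProtocols()));
    }
}
```

The printed lists depend on the JVM version; recent JVMs already disable SSLv3 by default, in which case the filter changes nothing.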
