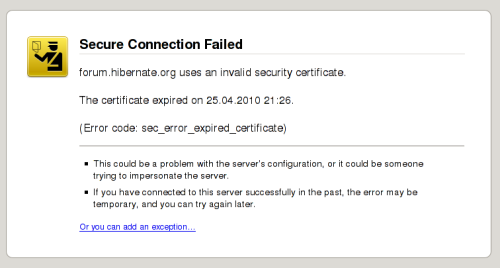

When customers visit your website, they do not want their passwords or credit card details to be visible to anyone sniffing the local network or controlling one of the routers along the way. That's why SSL (Secure Sockets Layer) was born. In simple words, it wraps the HTTP connection in an encrypted tunnel.

Another issue is the possibility of a man-in-the-middle attack or a faked DNS server response. You, as the customer opening the web page, need to be sure that you are connected to the website you intended to visit (fake bank websites are a real risk to your money, so this matters). That's why certification, which proves the server's identity, is closely bundled with connection encryption.

I'll show you how to obtain and install an SSL certificate on the Lighttpd web server to make your website more trustworthy for your customers.

First, create a directory structure that will make organisation easier:

```
# mkdir -p /etc/lighttpd/ssl/domain.com
# cd /etc/lighttpd/ssl/domain.com
```

Create the server key (you will be prompted for a password) and the CSR (Certificate Signing Request), from which the certificate will be created, in one step. When asked for the Common Name, enter your domain name (domain.com):

```
# openssl req -newkey rsa:2048 -keyout domain.com.key -out domain.com.csr
```
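Before sending the CSR anywhere, it is worth checking that it carries the right name. Below is a minimal sketch as a small shell function (`check_csr` is a hypothetical helper name, not part of OpenSSL) that uses only standard `openssl req` options: `-verify` checks the request's self-signature and `-subject` prints the name fields you entered.

```shell
# check_csr FILE  (hypothetical helper, POSIX sh)
# Verifies the CSR's self-signature (result is reported on stderr) and
# prints its subject on stdout, so you can confirm CN is your domain.
check_csr() {
    csr_file=$1
    openssl req -in "$csr_file" -noout -verify -subject
}
```

Run it as `check_csr domain.com.csr` and look for your domain in the `subject=` line (its exact formatting differs between OpenSSL versions).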

Remove the password from the key (I do not want to have to type it on every server restart):

```
# openssl rsa -in domain.com.key -out domain.com.nopass.key
```
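The domain.com.nopass.key file now holds the private key unprotected, so it is a good idea to make it readable by its owner only. A minimal sketch (the `restrict_key_files` helper name is hypothetical), assuming the files are owned by root and that your lighttpd reads them as root at startup, which is the usual setup but worth checking on your system:

```shell
# restrict_key_files FILE...  (hypothetical helper, POSIX sh)
# Sets mode 600 (owner read/write only) on every file given, because these
# files contain the private key without a password.
restrict_key_files() {
    for f in "$@"; do
        chmod 600 "$f"
    done
}
```

For example `restrict_key_files domain.com.nopass.key`; the same applies to the .pem file created later, since it contains the same key.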

Then send the generated domain.com.csr to your SSL certificate provider. You will have to prove that you own the domain (for example, an email with a special URL is sent to an address such as root@domain.com). After successful verification the certificate is issued. Paste it into the /etc/lighttpd/ssl/domain.com/domain.com.crt file.
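Once the certificate file is in place, you can check that it was pasted completely and was issued for the right domain. A sketch, again as a hypothetical helper (`show_cert`), using the standard `openssl x509` printing options:

```shell
# show_cert FILE  (hypothetical helper, POSIX sh)
# Prints the subject (who the certificate is for), the issuer (who signed it)
# and the notBefore/notAfter validity dates of a PEM certificate.
show_cert() {
    openssl x509 -in "$1" -noout -subject -issuer -dates
}
```

`show_cert /etc/lighttpd/ssl/domain.com/domain.com.crt` should print a subject containing your domain, your provider as the issuer, and the validity dates. If OpenSSL cannot parse the file, the paste was probably incomplete.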

Then create a PEM file. Lighttpd's ssl.pemfile option expects the private key and the certificate together in one file, so simply concatenate them:

```
# cat domain.com.nopass.key domain.com.crt > domain.com.pem
```
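If the key and the certificate in the PEM file do not belong together, OpenSSL reports a key/certificate mismatch and lighttpd will not serve SSL, so a quick check can save some head-scratching. A minimal sketch, assuming an RSA key as generated above (`key_matches_cert` is a hypothetical helper name): the certificate matches the key when both contain the same RSA modulus.

```shell
# key_matches_cert KEY CERT  (hypothetical helper, POSIX sh)
# Returns 0 if the RSA private key and the certificate share the same
# modulus, 1 otherwise (including when either file cannot be read).
key_matches_cert() {
    key_mod=$(openssl rsa -noout -modulus -in "$1") || return 1
    cert_mod=$(openssl x509 -noout -modulus -in "$2") || return 1
    # Non-empty check: two failed reads must not count as a match.
    [ -n "$key_mod" ] && [ "$key_mod" = "$cert_mod" ]
}
```

Use it on the password-less key, e.g. `key_matches_cert domain.com.nopass.key domain.com.crt && echo OK`; the original domain.com.key would prompt for its password.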

Then tell Lighttpd to handle SSL traffic for the given IP address and port:

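```
$SERVER["socket"] == "IP-ADDRESS-HERE:443" {
    ssl.engine  = "enable"
    # private key + certificate combined in one file
    ssl.pemfile = "/etc/lighttpd/ssl/domain.com/domain.com.pem"
    # intermediate (chain) certificate(s) supplied by your provider, not your
    # own certificate; the file name below is only an example
    ssl.ca-file = "/etc/lighttpd/ssl/domain.com/ca-bundle.crt"
}
```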
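On newer lighttpd releases (1.4.56 and later, to my knowledge) the SSL/TLS code lives in a separate module, mod_openssl, instead of being built into the server. Whether it is loaded automatically when ssl.engine is enabled depends on the version, so check the documentation for yours; on such a release, listing it explicitly in lighttpd.conf is the safe option (older releases have no such module, so leave the line out there):

```
# load lighttpd's OpenSSL TLS module (newer lighttpd releases only)
server.modules += ( "mod_openssl" )
```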

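First note: for SSL traffic you have to specify an IP address, not a domain name. The SSL handshake happens BEFORE the HTTP headers (including Host) are sent to the server, so classic name-based virtual hosts cannot pick the certificate: it must be presented first. The exception is the SNI (Server Name Indication) TLS extension, which sends the host name during the handshake; it only helps when both the browser and the server support it.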
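To confirm from the outside that the server presents the new certificate on that IP address, you can run a TLS handshake with `openssl s_client` and print the certificate it receives. A sketch as a hypothetical helper (`tls_cert_summary`); the `-servername` option sends the host name via SNI, which the one-certificate-per-IP setup above does not need but which does no harm:

```shell
# tls_cert_summary ADDRESS:PORT HOSTNAME  (hypothetical helper, POSIX sh)
# Connects with TLS, then extracts the server certificate from s_client's
# output and prints its subject, issuer and validity dates.
tls_cert_summary() {
    openssl s_client -connect "$1" -servername "$2" </dev/null 2>/dev/null |
        openssl x509 -noout -subject -issuer -dates
}
```

For example `tls_cert_summary IP-ADDRESS-HERE:443 domain.com`. If it prints an error instead of the certificate, the handshake failed; run `openssl s_client` by hand without the redirections to see why.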

Second note: if you use the same domain for HTTP and HTTPS traffic, you don't have to repeat server.document-root and other domain-related parameters here. A top-level host conditional applies to matching requests on any socket, so HTTPS requests borrow those settings from the plain HTTP section:

```
$HTTP["host"] == "domain.com" {
    (...)
}
```

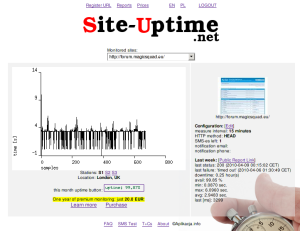

Now pointing your browser to https://domain.com should show your web application without any warnings.

Happy SSL-ing!