A software project involves many activities. One of them, not much appreciated by the typical developer, is the build process. Its job is to create executables or libraries from source code. You may say: hey, you forgot about documentation, generated API specifications, installation, deployment, ...! As you can see, many tasks are handled by this activity.


I'll review the most popular build tools and point out their strengths and weaknesses:

- Make

- Ant

- Maven

- IDE-based builders

Make

Make is the oldest tool mentioned here. It comes from the UNIX world and is very popular among non-Java projects. Make reads its build specification from a text file called "Makefile". Here is a sample Makefile that builds an executable from C source code:

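```make
# $@ expands to the target name, $< to the first dependency.
# Link the object file into the executable.
helloworld: helloworld.o
	cc -o $@ $<

# Compile the C source into an object file.
helloworld.o: helloworld.c
	cc -c -o $@ $<

# Remove everything the build produced.
clean:
	rm -f helloworld helloworld.o
```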

General makefile syntax:

```
target: dependencies
<tab>command1
<tab>command2
...
```

You can chain dependencies: a dependency of one target can itself be a target with its own dependencies. Make resolves the chain and builds everything in the correct order.

Make is used for Linux kernel development and (with support from configure, imake, ...) for many Unix-based programs.

Main benefits of Make:

- compact syntax

- basic functionality is a standard among all implementations

- very popular among operating system distributions

Main disadvantages:

- The <tab> requirement can trip up novices

- Syntax may be cryptic for beginners

Ant

A few quirks of Make (the TAB requirement, portability problems, etc.) led to the creation of Ant. It is a Java-based, XML-driven tool used mainly for building Java projects.

```xml
<?xml version="1.0"?>
<project name="Hello" default="compile">
    <target name="clean" description="remove intermediate files">
        <delete dir="classes"/>
    </target>
    <target name="clobber" depends="clean" description="remove all artifact files">
        <delete file="hello.jar"/>
    </target>
    <target name="compile" description="compile the Java source code to class files">
        <mkdir dir="classes"/>
        <javac srcdir="." destdir="classes"/>
    </target>
    <target name="jar" depends="compile" description="create a Jar file for the application">
        <jar destfile="hello.jar">
            <fileset dir="classes" includes="**/*.class"/>
            <manifest>
                <attribute name="Main-Class" value="HelloProgram"/>
            </manifest>
        </jar>
    </target>
</project>
```

Ant tries to improve portability by supplying many built-in operations (Make uses shell commands for these).
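To make the portability point concrete, here is a minimal sketch that removes the same `classes` directory in two ways. The project and target names are made up for illustration; `<delete>` and `<exec>` are standard Ant tasks.

```xml
<?xml version="1.0"?>
<!-- Illustrative only: project and target names are hypothetical. -->
<project name="PortabilitySketch" default="clean">

    <!-- Portable: the built-in delete task is implemented in Java,
         so it behaves the same on every platform Ant runs on. -->
    <target name="clean" description="remove class files with a built-in task">
        <delete dir="classes"/>
    </target>

    <!-- Not portable: this only works where an "rm" program is on the PATH,
         which is what a Makefile recipe would rely on. -->
    <target name="clean-shell" description="remove class files with a shell command">
        <exec executable="rm" failonerror="true">
            <arg value="-rf"/>
            <arg value="classes"/>
        </exec>
    </target>

</project>
```

Running `ant clean` should work anywhere, while `ant clean-shell` will typically fail on a plain Windows machine.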

Ant is still a low-level tool. You can compose your build process any way you want (almost everything can be customized). The need for a higher level of abstraction led to the next tool.

Maven

Maven follows the "convention over configuration" philosophy. Configuration is also written in XML but, unlike Ant, you tell Maven the "what", not the "how". Most parameters (source folders, target folders) have sensible defaults, so you can (theoretically) start work on any Maven-based project without surprises.
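As a rough illustration of the "what, not how" idea, a minimal `pom.xml` could look like the sketch below. The `groupId`, `artifactId` and `version` values are placeholders invented for this example. Note that there are no compile or jar steps at all.

```xml
<?xml version="1.0" encoding="UTF-8"?>
<project xmlns="http://maven.apache.org/POM/4.0.0"
         xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
         xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
    <modelVersion>4.0.0</modelVersion>

    <!-- Placeholder coordinates, chosen only for this sketch. -->
    <groupId>com.example</groupId>
    <artifactId>hello</artifactId>
    <version>1.0-SNAPSHOT</version>

    <!-- "What" to build: a jar. The "how" comes from Maven's defaults. -->
    <packaging>jar</packaging>
</project>
```

With this file in place, `mvn package` looks for sources in `src/main/java` and tests in `src/test/java`, and writes the jar into the `target` directory, all by convention.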

Main Maven benefits:

- standardised project structure

- binary libraries are stored outside source tree (you declare dependencies, libraries are downloaded on demand during build)

- support for multi-project builds with complicated dependencies
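The last two points can be sketched in a single parent `pom.xml`. The module names and project coordinates are hypothetical; the JUnit dependency is just a familiar example of a library that Maven downloads from a repository instead of keeping it in the source tree.

```xml
<?xml version="1.0" encoding="UTF-8"?>
<project xmlns="http://maven.apache.org/POM/4.0.0"
         xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
         xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
    <modelVersion>4.0.0</modelVersion>

    <!-- Placeholder coordinates for the parent project. -->
    <groupId>com.example</groupId>
    <artifactId>hello-parent</artifactId>
    <version>1.0-SNAPSHOT</version>
    <packaging>pom</packaging>

    <!-- Hypothetical sub-projects, each in its own directory with its own pom.xml.
         Maven works out the build order from the dependencies between them. -->
    <modules>
        <module>hello-core</module>
        <module>hello-app</module>
    </modules>

    <!-- Declared here, inherited by every module, downloaded on demand. -->
    <dependencies>
        <dependency>
            <groupId>junit</groupId>
            <artifactId>junit</artifactId>
            <version>4.13.2</version>
            <scope>test</scope>
        </dependency>
    </dependencies>
</project>
```

Running `mvn package` in the parent directory builds all listed modules in one go.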

The main problem you may face is the distance between the low-level build commands and the user interface. Some details are hidden, and you need a lot of experience with Maven to properly diagnose problems that occur during a build.

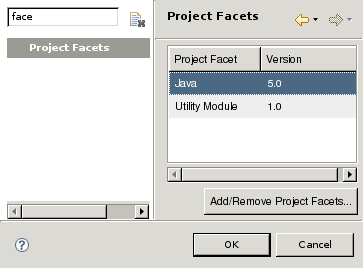

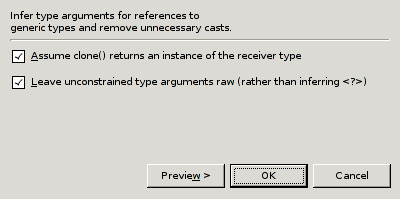

IDE-based builders

A very popular option for novice/Windows programmers. You set up the build configuration within your IDE (Integrated Development Environment) and expect all executables/libraries to be built.

Benefits:

- Easy to use

- Incremental builds

Drawbacks:

- Hard to connect to continuous integration tools

Every big project I have worked on had its IDE-based build duplicated with Maven/Ant-based scripts. Why? Because the Hudson/CruiseControl builds were driven through a scripting interface. You can still use IDE builders as a help during development (they are faster than script rebuilds).

What to choose?

It depends:

- Small project created on a Unix machine: use Make

- Bigger project with custom build commands: use Ant

- Multi-project build with complicated dependencies: use Maven

Good luck :-)

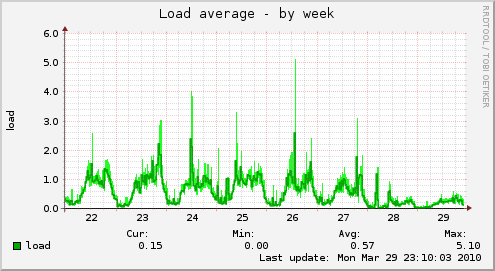

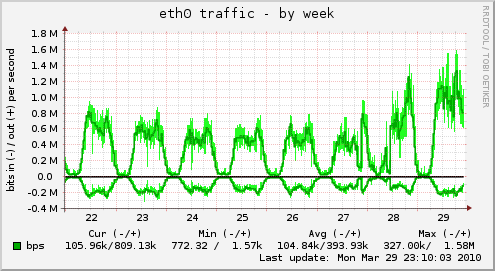

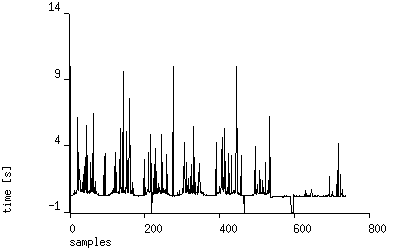

Now the systems perform very well.
